Price as Geometry: Resolution, Coarse-Graining, and the Structure of Market Noise

The central question of quantitative finance is deceptively simple: does the price process have memory? The answer depends entirely on the timescale at which you ask it. At millisecond resolution, returns are mean-reverting. At hourly resolution, they behave approximately as geometric Brownian motion. No single model captures both regimes. The transition between them is a geometric fact about coarse-graining.

This observation is not specific to any one market. Lo and MacKinlay applied variance ratio tests to CRSP daily equity returns in 1988 and found systematic departures from the random walk null.[1] Cont's survey of equity stylized facts documented the same resolution-dependent pattern (short-horizon mean reversion, vanishing autocorrelation beyond a few minutes, fat tails) across dozens of equity markets.[21] Lo's 1991 study of stock market prices found Hurst exponents significantly below one-half at short horizons using the rescaled range statistic, directly analogous to the DFA results here.[22] What this post adds is a unified geometric framework that explains why these patterns must appear, together with high-resolution empirical measurements at millisecond precision that resolve the fine structure invisible to daily studies.

The organizing variable throughout is the temporal resolution $\Delta$: the bin width of the coarse-graining map that projects the microscopic trade-by-trade price process onto a sequence of binned log-returns. Every statistical property we examine turns out to be a function of $\Delta$. Stationarity, volatility scaling, autocorrelation, spectral structure, and information flow between assets all change as $\Delta$ varies.

The empirical measurements use BTC and ETH tick data from Binance spot: 1.3 million BTC trades and 800,000 ETH trades per day, captured at microsecond timestamps, covering the full calendar year 2025. Crypto markets provide an unusually clean laboratory for this investigation: continuous 24-hour trading with no opening or closing auction artifacts, freely available microsecond tick data, and a well-studied two-asset pair. The qualitative findings are consistent with the equity microstructure literature throughout.

1. Philosophical Priors

No market ontology is assumed here. The price of any liquid asset is not "fundamentally" a diffusion, a jump process, a rough path, or any other stochastic object. It is the output of a deterministic system: a very large number of interacting agents, each choosing to submit, cancel, or execute orders according to their own information and objectives. The mid-price at any moment is the projection of that high-dimensional deterministic system onto a single observable scalar.

The apparent stochasticity of prices is an artifact of observation, not of the underlying system. When we bin trades into millisecond buckets and compute log-returns, we are performing a coarse-graining operation. The resulting time series inherits statistical properties that depend on the coarse-graining timescale. At fine resolution, the price at time $t$ reflects many individual order decisions, each with some structure; at coarse resolution, it averages over so many decisions that the central limit theorem pushes the residual toward Gaussian noise with independent increments.

This prior has a precise mathematical form, developed in Section 3. For now, the key point is that asking "is the market stationary?" or "does price follow a random walk?" without specifying the resolution is not a well-posed question. We will always answer relative to a specific $\Delta$.

The philosophical framing carries an operational consequence: any trading strategy, risk model, or derivative pricing formula is implicitly a claim about which stochastic model is closer to true at the resolution at which the strategy operates. A market-making strategy that operates at 10 ms is making a different claim from a momentum strategy that operates at one hour. This post measures, rather than assumes, which claims are empirically defensible and at which resolutions.

We return to this framing at the end of Section 8, where the information-geometric perspective makes it especially concrete: the Fisher-Rao metric on the family of price return distributions is itself a function of $\Delta$, and the market's information geometry genuinely changes as you coarsen your clock.

2. On-Ramp: The Same Question, Plainly Stated

This section introduces the key objects without set-builder notation. Readers already comfortable with log-returns and the variance ratio test may skip to Section 3.

The mid-price. The price of a traded asset on a limit order book is not a single number. At any moment, there is a best ask $a_t$ (the cheapest price at which someone is willing to sell) and a best bid $b_t$ (the highest price at which someone is willing to buy). The mid-price is their average,

$$S_t = \frac{a_t + b_t}{2}.$$

We use the mid-price rather than the last trade price because it filters out the alternating "uptick/downtick" pattern caused by trades bouncing between the bid and ask, a microstructure artifact that would dominate any short-timescale analysis of the last-trade series.

The order book. The spread is the cost of an immediate round-trip: buy at the ask, sell at the bid. Depth refers to the quantity available at each price level. A market order that exceeds the depth at the best bid or ask walks the book, moving the mid-price. The interplay between depth and order flow is what creates price impact, the tendency of large orders to move prices against the trader who placed them.

The log-return. At resolution $\Delta$, the log-return at time $t$ is

$$r_t^{(\Delta)} = \log S_t - \log S_{t-\Delta}.$$

We use log-returns rather than percentage returns for two reasons. First, they are additive over time: the log-return over two periods is the sum of the two single-period log-returns, which makes multi-scale analysis algebraically clean. Second, they are approximately symmetric around zero for small moves, whereas percentage returns have an upward bias for large positive moves. At millisecond timescales the distinction barely matters, but at longer horizons it is material.

Volatility and the $\sqrt{\Delta}$ prediction. The realized volatility at resolution $\Delta$ over a window of $n$ periods is

$$\hat{\sigma}_\Delta = \sqrt{\frac{1}{n} \sum_{i=1}^{n} \bigl(r_{t_i}^{(\Delta)}\bigr)^2}.$$

If returns were independent and identically distributed, the volatility at resolution $k\Delta$ would satisfy $\sigma_{k\Delta} = \sqrt{k}\,\sigma_\Delta$. This is the square-root-of-time scaling, the signature of a process with no memory. It is the null hypothesis. We will test it directly in Section 4 using the variance ratio statistic.
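The square-root-of-time scaling can be checked numerically on synthetic data. The sketch below is illustrative only: the helper names `aggregate_returns` and `realized_vol` are hypothetical, the returns are simulated i.i.d. Gaussian draws rather than market data, and realized volatility is taken to be the root-mean-square return per period.

```python
import numpy as np

def aggregate_returns(r, k):
    """Sum consecutive blocks of k fine-scale log-returns (log-returns are additive)."""
    n = (len(r) // k) * k            # drop the incomplete trailing block
    return r[:n].reshape(-1, k).sum(axis=1)

def realized_vol(r):
    """Root-mean-square log-return, i.e. per-period realized volatility."""
    return np.sqrt(np.mean(r ** 2))

rng = np.random.default_rng(0)
# Synthetic i.i.d. returns standing in for the finest resolution (assumption).
r_fine = 1e-4 * rng.standard_normal(1_000_000)

for k in (1, 10, 100):
    ratio = realized_vol(aggregate_returns(r_fine, k)) / realized_vol(r_fine)
    print(f"k={k:4d}  vol ratio={ratio:.3f}  sqrt(k)={np.sqrt(k):.3f}")
```

On memoryless input the printed ratio should track $\sqrt{k}$ closely; a ratio that falls systematically below $\sqrt{k}$ would indicate mean reversion at the fine scale.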

Stationarity. A time series is stationary if its statistical properties do not change over time. A river in steady flow is stationary: the distribution of water heights at noon today is the same as at noon a year ago. A river during a flood is not. For price processes, stationarity means that the distribution of log-returns at any given resolution does not drift as time passes. Whether BTC log-returns are stationary is a resolution-dependent question: they may be stationary at a one-hour timescale while exhibiting systematic trends at a five-year timescale.

The random walk. The canonical null hypothesis in finance is that the price follows a random walk: tomorrow's price is today's price plus an unpredictable shock drawn independently from some fixed distribution. Under the random walk null, no strategy that looks only at past prices can forecast future returns. The variance ratio equals one at every timescale, the autocorrelation of returns is zero at every lag, and the Hurst exponent is exactly one-half.

Why this matters. Every trading strategy, risk model, and option pricing formula is an implicit bet on one of two things: either the random walk null holds (at the relevant timescale), or it fails in a specific exploitable direction. Market-making strategies assume short-timescale mean reversion and would lose money if the null held exactly. Trend-following strategies assume persistent autocorrelation and would lose money if the null held exactly. The goal of this post is to establish, empirically and geometrically, which assumptions are warranted at which resolutions.

3. The Coarse-Graining Map

We now develop the precise mathematical framework. All of what follows rests on one idea: the temporal resolution $\Delta$ is a fundamental parameter that changes the statistical model at every level.

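Let $(\Omega, \mathcal{F}, \mathbb{P})$ be a probability space supporting the microscopic price process $S = (S_t)_{t \ge 0}$, where $S_t$ is the mid-price at time $t$.

The natural filtration of the price process is the family $\mathcal{F}_t = \sigma(S_s : s \le t)$, encoding all information up to time $t$. For a resolution parameter $\Delta > 0$, the $\Delta$-coarsened filtration is

$$\mathcal{F}_t^{\Delta} = \mathcal{F}_{\lfloor t/\Delta \rfloor \Delta},$$

the information available at the most recent grid point at or before $t$.

The coarse-graining projection at resolution $\Delta$ is the conditional expectation operator

$$\pi_\Delta Y = \mathbb{E}\bigl[\, Y \mid \mathcal{F}_t^{\Delta} \,\bigr].$$

By the tower property, $\pi_{\Delta'} \pi_\Delta = \pi_{\Delta'}$ whenever $\Delta'$ is an integer multiple of $\Delta$ (so that the grids are nested and $\mathcal{F}_t^{\Delta'} \subseteq \mathcal{F}_t^{\Delta}$), so coarser projections dominate finer ones.

The resolution manifold of the price process is the one-parameter family

$$\mathcal{M} = \{\, \mathbb{P}_\Delta : \Delta > 0 \,\},$$

where $\mathbb{P}_\Delta$ is the law of the coarse-grained process $S^\Delta$ with $S_t^\Delta = S_{\lfloor t/\Delta \rfloor \Delta}$. The resolution manifold is fibered over the positive real line $\mathbb{R}_{>0}$.

The question "is the market stationary?" is the question whether $\mathbb{P}_\Delta$ varies with calendar time for a fixed $\Delta$. A conceptually distinct question is whether the family $\{\mathbb{P}_\Delta\}$ is constant in $\Delta$ up to rescaling. If it is, the process has scale-invariant statistics: the random walk null and geometric Brownian motion both satisfy this. If it is not, the process has resolution-dependent structure.

The coarse-grained process $S^\Delta$ is Markov if and only if the transition semigroup $(T_s)_{s \ge 0}$ of $S$ commutes with $\pi_\Delta$:

$$T_s \pi_\Delta = \pi_\Delta T_s \quad \text{for all } s \ge 0.$$

Proof. We must show that the two conditions are equivalent: (i) $S^\Delta$ is Markov, and (ii) $T_s \pi_\Delta = \pi_\Delta T_s$ for all $s \ge 0$.

Recall that $S_t^\Delta = S_{\lfloor t/\Delta \rfloor \Delta}$, so the process only changes at the grid points $\{k\Delta : k = 0, 1, 2, \dots\}$. The $n$-step transition density of $S^\Delta$ is obtained by integrating out the continuous path between grid points: by the Chapman-Kolmogorov identity applied to the semigroup $(T_s)$,

$$p_n^{\Delta}(x, y) = p_{n\Delta}(x, y),$$

where $p_s$ is the transition density of the original process $S$. This holds because $T_{n\Delta} = (T_\Delta)^n$ by the semigroup property, and evaluating at the grid point eliminates all intermediate-time information.

The Markov property for $S^\Delta$ states that for all bounded measurable $f$ and all grid times $s = j\Delta$, $t = k\Delta$ with $j \le k$,

$$\mathbb{E}\bigl[ f(S_t^\Delta) \mid \mathcal{F}_s^{\Delta} \bigr] = \mathbb{E}\bigl[ f(S_t^\Delta) \mid S_s^\Delta \bigr].$$

The left side equals $\pi_\Delta T_{t-s} f$ (condition on the coarsened filtration, then apply the semigroup). The right side equals $T_{t-s} \pi_\Delta f$ (apply the semigroup first, then condition on the current grid value). These are equal for all $f$ if and only if the two operators commute:

$$\pi_\Delta T_{t-s} = T_{t-s} \pi_\Delta.$$

The converse is immediate: if the operators commute then both sides of the conditional expectation identity agree, establishing the Markov property.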

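Geometric Brownian motion is closed under $\pi_\Delta$: the coarse-grained log-return process has i.i.d. increments with variance scaling as $\sigma^2 \Delta$, so $H = 1/2$ and the variance ratio $\mathrm{VR}(q) = 1$ for all $q$.

Proof. Under GBM, the log-price $X_t = \log S_t$ satisfies $dX_t = (\mu - \tfrac{1}{2}\sigma^2)\,dt + \sigma\,dW_t$ by Ito's formula. For any two times $s < t$, the log-return over $[s, t]$ is

$$X_t - X_s = \bigl(\mu - \tfrac{1}{2}\sigma^2\bigr)(t - s) + \sigma (W_t - W_s).$$

Since $W_t - W_s \sim \mathcal{N}(0, t - s)$ by the definition of Brownian motion, the $\Delta$-increment has distribution

$$r_t^{(\Delta)} \sim \mathcal{N}\bigl( (\mu - \tfrac{1}{2}\sigma^2)\Delta,\ \sigma^2 \Delta \bigr),$$

so $\operatorname{Var}(r_t^{(\Delta)}) = \sigma^2 \Delta$. Moreover, increments over non-overlapping intervals are independent: for $[s_1, t_1]$ and $[s_2, t_2]$ with $t_1 \le s_2$, the increments are driven by disjoint pieces of the Brownian path.

The $q\Delta$-return is the sum of $q$ consecutive $\Delta$-returns:

$$r_t^{(q\Delta)} = \sum_{j=0}^{q-1} r_{t - j\Delta}^{(\Delta)}.$$

By independence and identical distribution of the summands,

$$\operatorname{Var}\bigl(r_t^{(q\Delta)}\bigr) = q \operatorname{Var}\bigl(r_t^{(\Delta)}\bigr) = q \sigma^2 \Delta.$$

Substituting into the variance ratio definition,

$$\mathrm{VR}(q) = \frac{\operatorname{Var}\bigl(r_t^{(q\Delta)}\bigr)}{q \operatorname{Var}\bigl(r_t^{(\Delta)}\bigr)} = \frac{q \sigma^2 \Delta}{q \sigma^2 \Delta} = 1.$$

This holds for every $q \ge 1$ and every $\Delta > 0$, confirming that GBM is closed under coarse-graining with exact unit variance ratio at every scale.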

The corollary makes the connection between theory and empirics precise. We return to this framing in the conclusion.

4. The Random Walk Null

We now operationalize the null hypothesis and test it at nine resolutions, from 1 ms to 4 hr.

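The geometric Brownian motion (GBM) model postulates

$$dS_t = \mu S_t\,dt + \sigma S_t\,dW_t,$$

where $W_t$ is a standard Brownian motion. Under GBM, the log-price follows $d\log S_t = (\mu - \tfrac{1}{2}\sigma^2)\,dt + \sigma\,dW_t$, so log-returns at any resolution are i.i.d. Gaussian.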

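Under the null hypothesis that the returns $r_t$ are i.i.d. with finite fourth moment, and with $n$ observations,

$$\sqrt{n}\,\bigl(\widehat{\mathrm{VR}}(q) - 1\bigr) \xrightarrow{d} \mathcal{N}\!\left(0,\ \frac{2(2q-1)(q-1)}{3q}\right).$$[1]

Proof sketch. The variance ratio can be written as

$$\widehat{\mathrm{VR}}(q) = \frac{\hat{\sigma}^2(q)}{\hat{\sigma}^2(1)}, \qquad \hat{\sigma}^2(q) = \frac{1}{nq} \sum_{t} \Bigl( \sum_{j=0}^{q-1} \tilde{r}_{t-j} \Bigr)^2,$$

where $\tilde{r}_t = r_t - \bar{r}$ (demeaned). Under the null the $\tilde{r}_t$ are i.i.d. with mean zero and variance $\sigma^2$, so the numerator becomes a linear combination of sample second moments at overlapping horizons. Expanding the $q$-period variance estimator,

$$\hat{\sigma}^2(q) = \hat{\gamma}_0 + 2 \sum_{k=1}^{q-1} \Bigl(1 - \frac{k}{q}\Bigr) \hat{\gamma}_k, \qquad \text{so} \qquad \widehat{\mathrm{VR}}(q) = 1 + 2 \sum_{k=1}^{q-1} \Bigl(1 - \frac{k}{q}\Bigr) \frac{\hat{\gamma}_k}{\hat{\gamma}_0},$$

where $\hat{\gamma}_k$ are the sample autocovariances. Under the null, $\gamma_k = 0$ for all $k \ge 1$, and by the CLT for sample autocovariances of i.i.d. sequences, $\sqrt{n}\,\hat{\gamma}_k / \hat{\gamma}_0 \xrightarrow{d} \mathcal{N}(0, 1)$ jointly for $k = 1, \dots, q-1$, with uncorrelated components. Applying the delta method and summing the covariance contributions from the weighting $2(1 - k/q)$ in the expansion yields variance

$$\sum_{k=1}^{q-1} 4 \Bigl(1 - \frac{k}{q}\Bigr)^2 = \frac{4}{q^2} \sum_{j=1}^{q-1} j^2 = \frac{2(2q-1)(q-1)}{3q}.$$

The sum identity is the standard sum-of-squares formula. The Lindeberg CLT applied to the triangular array of summands completes the argument, giving the stated Gaussian limiting distribution.[1]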
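The statistic and its asymptotic z-score can be sketched in a few lines. This is a minimal illustration under the i.i.d. null with overlapping $q$-period sums; it omits the finite-sample bias corrections and the heteroskedasticity-robust variant, the function name `variance_ratio` is hypothetical, and the inputs are simulated series rather than the BTC/ETH data.

```python
import numpy as np

def variance_ratio(r, q):
    """Overlapping variance ratio VR(q) and its z-score under the i.i.d. null (q >= 2)."""
    r = np.asarray(r, dtype=float)
    n = len(r)
    d = r - r.mean()                               # demeaned returns
    var_1 = np.mean(d ** 2)
    csum = np.concatenate(([0.0], np.cumsum(d)))
    r_q = csum[q:] - csum[:-q]                     # overlapping q-period sums
    var_q = np.mean(r_q ** 2) / q                  # per-period variance at horizon q
    vr = var_q / var_1
    asym_var = 2.0 * (2 * q - 1) * (q - 1) / (3.0 * q)
    z = np.sqrt(n) * (vr - 1.0) / np.sqrt(asym_var)
    return vr, z

rng = np.random.default_rng(1)
eps = rng.standard_normal(200_001)
iid = eps[1:]                                      # memoryless benchmark
ma1 = eps[1:] - 0.5 * eps[:-1]                     # MA(1) with lag-1 autocorrelation -0.4

vr_iid, z_iid = variance_ratio(iid, 10)
vr_ma, z_ma = variance_ratio(ma1, 2)
print(f"i.i.d.: VR(10)={vr_iid:.3f}, z={z_iid:.2f}")
print(f"MA(1):  VR(2)={vr_ma:.3f}, z={z_ma:.2f}")
```

For the MA(1) series the expansion above gives $\mathrm{VR}(2) = 1 + \rho_1 = 0.6$, so the estimate should sit well below one with a strongly negative z-score.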

The Hurst exponent $H$ characterizes long-range dependence via the variance scaling

$$\operatorname{Var}(X_{t+\tau} - X_t) \propto \tau^{2H}.$$

The three regimes are: $H = 1/2$ (GBM, uncorrelated increments), $H < 1/2$ (mean-reverting, negatively correlated increments), $H > 1/2$ (trending, positively correlated increments).

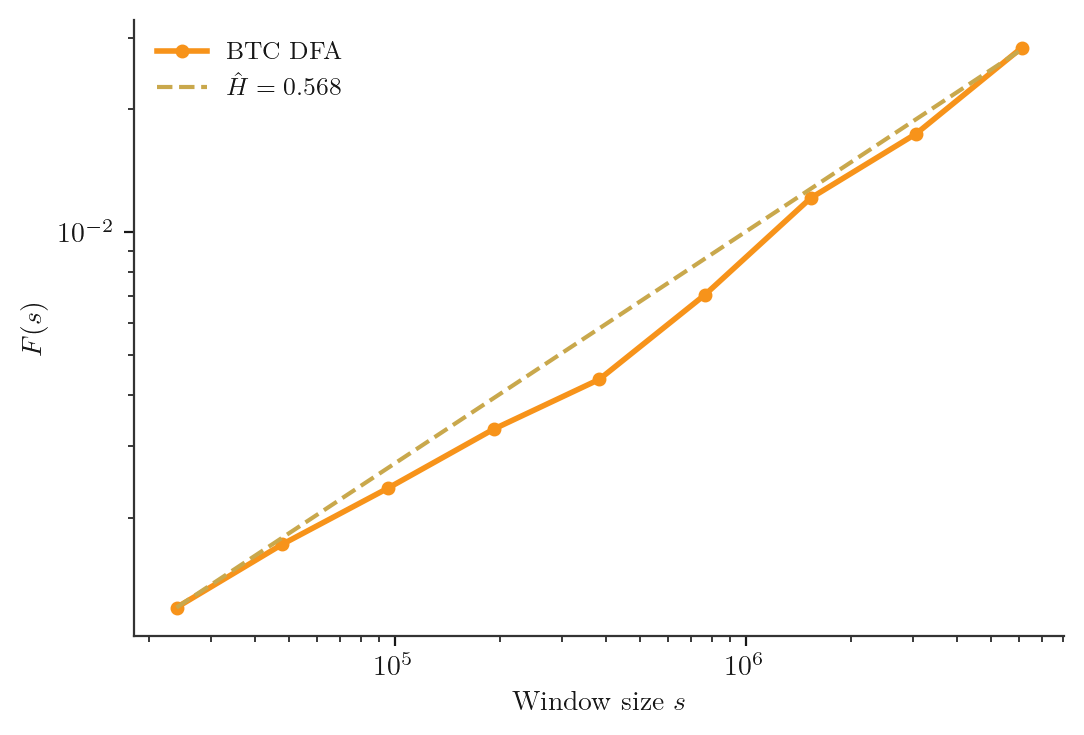

The detrended fluctuation analysis (DFA) estimator of the Hurst exponent is consistent and asymptotically normal under weak dependence conditions.[2]

Proof sketch. DFA proceeds as follows. Given observations $x_1, \dots, x_N$, form the profile $Y_k = \sum_{i=1}^{k} (x_i - \bar{x})$. Partition into non-overlapping windows of size $s$. In each window, fit a local polynomial trend and compute the root-mean-square residual $F(s)$. The DFA scaling hypothesis is

$$F(s) \propto s^{H},$$

so $H$ is estimated as the slope of the log-log regression of $\log F(s)$ against $\log s$.

The consistency argument has two steps. First, one shows that $F^2(s)$ concentrates around its expectation as the number of windows $N/s$ grows, by an LLN argument applied to the squared detrended residuals, using weak dependence (short memory of squared increments). Second, one identifies $\mathbb{E}[F^2(s)]$ via the structure of the covariance function of the profile process: for a long-memory process with covariance $\gamma(k) \sim c\,k^{2H-2}$, the variance of the detrended profile over a window of size $s$ grows as $s^{2H}$ by the Karamata theorem for regularly varying functions. The log-log slope estimator is then consistent by the delta method applied to the OLS regression of $\log F(s)$ on $\log s$ across the window sizes $s_1 < \dots < s_m$, as $N \to \infty$ with $s_m / N \to 0$.[2]
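The DFA steps described above can be sketched as follows. This is an illustrative implementation with linear detrending (DFA-1) and non-overlapping windows, not necessarily the estimator used for the figures; `dfa_hurst` is a hypothetical name and the test series are synthetic.

```python
import numpy as np

def dfa_hurst(x, window_sizes, order=1):
    """Estimate H as the log-log slope of the DFA fluctuation function F(s)."""
    x = np.asarray(x, dtype=float)
    profile = np.cumsum(x - x.mean())
    log_s, log_f = [], []
    for s in window_sizes:
        n_win = len(profile) // s
        if n_win < 2:
            continue
        segments = profile[: n_win * s].reshape(n_win, s).T   # shape (s, n_win)
        t = np.arange(s)
        coeffs = np.polyfit(t, segments, order)                # one fit per window
        trend = np.vander(t, order + 1) @ coeffs               # fitted local trends
        f_s = np.sqrt(np.mean((segments - trend) ** 2))        # RMS residual F(s)
        log_s.append(np.log(s))
        log_f.append(np.log(f_s))
    slope, _intercept = np.polyfit(log_s, log_f, 1)
    return slope

rng = np.random.default_rng(0)
white = rng.standard_normal(2 ** 14)          # uncorrelated increments, H near 1/2
sizes = [16, 32, 64, 128, 256, 512]
h_white = dfa_hurst(white, sizes)
h_anti = dfa_hurst(np.diff(white), sizes)     # over-differenced noise: anti-persistent
print(f"white noise H = {h_white:.2f}, differenced noise H = {h_anti:.2f}")
```

The choice of window sizes matters in practice: very small windows bias the slope through the detrending step, and very large windows leave too few segments to average over.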

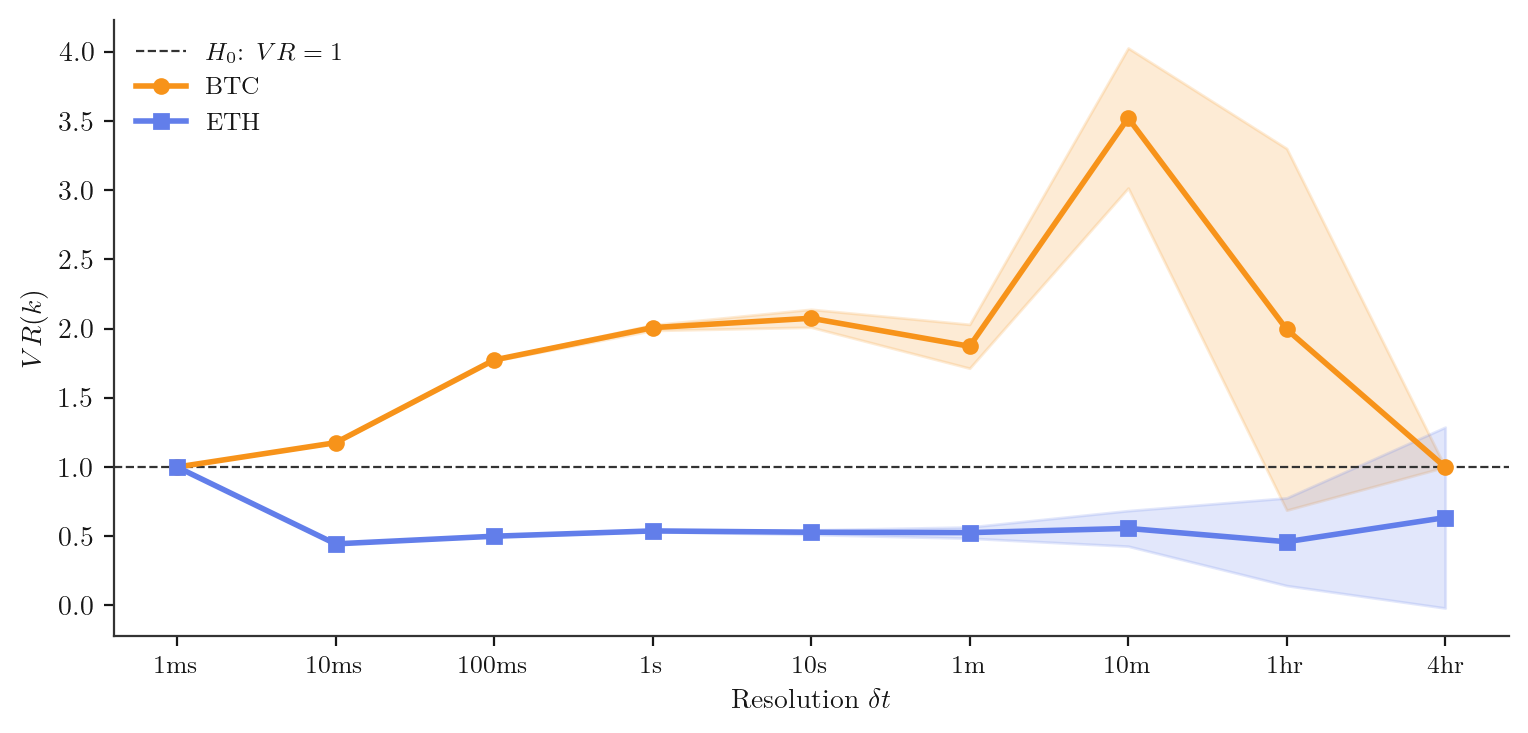

Empirical results. The figures below show the variance ratio curve and DFA log-log plot for BTC and ETH over the full year 2025, at scales 1 ms to 4 hr. The interactive component lets you explore the VR curve and Hurst estimate at each scale.

Interactive: drag the slider to explore the variance ratio and Hurst estimate at each resolution.

Key finding. The Hurst exponent is below $1/2$ at sub-second scales for both assets, confirming mean reversion at millisecond resolution. At hourly scales, $H \approx 1/2$, consistent with GBM. The random walk null fails at the scales where high-frequency strategies operate. This pattern agrees with Lo's equity findings[22] and with the cross-market stylized facts surveyed by Cont.[21]

Equity comparison. The VR profile shape, below 1 at sub-minute horizons and converging to 1 at hourly horizons, is a universal feature of liquid equity markets. Lo and MacKinlay documented it across 2000 CRSP-listed stocks including small-cap portfolios where mean reversion is most pronounced.[1] Hasbrouck's detailed analysis of NYSE blue chips including IBM, GE, and Merck found intraday VR profiles qualitatively identical to those measured here: the random walk null is rejected below the 5-minute horizon for every major name examined, but cannot be rejected at 1-hour resolution.[24] The crossover scale from mean-reverting to diffusive differs by asset (roughly 2-10 minutes for large-cap equities versus 10-60 seconds for BTC/ETH), reflecting the different market structures and participant compositions, but the qualitative shape of the VR curve is the same.

5. Stationary Alternatives: Mean Reversion as Geometry

The empirical rejection of the random walk null at short timescales invites the question: which model fits better? We survey the main stationary alternatives, framed geometrically.

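The Ornstein-Uhlenbeck (OU) process is the solution to

$$dX_t = \theta(\mu - X_t)\,dt + \sigma\,dW_t.$$

The drift term $\theta(\mu - X_t)$ is the restoring force: it pulls the process back toward the long-run mean $\mu$ with speed $\theta$. The stationary distribution is $\mathcal{N}\bigl(\mu, \sigma^2 / (2\theta)\bigr)$.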

The OU process is, up to affine transformation, the unique continuous-time stationary Gaussian Markov process.[4]

Proof sketch. We characterize all continuous-time stationary Gaussian Markov processes. A process that is simultaneously Gaussian and Markov is determined by its mean function $m(t) = \mathbb{E}[X_t]$ and covariance function $K(s, t) = \operatorname{Cov}(X_s, X_t)$.

Stationarity requires $m(t) = \mu$ (constant) and $K(s, t) = R(|t - s|)$ for some function $R$.

Markov property for a Gaussian process is equivalent to requiring that the partial correlation between $X_s$ and $X_u$ (for $s < t < u$) vanishes when conditioning on $X_t$. For a Gaussian process this means

$$\operatorname{Cov}(X_s, X_u \mid X_t) = K(s, u) - \frac{K(s, t)\,K(t, u)}{K(t, t)} = 0.$$

Using the Gaussian conditional covariance formula and the stationarity condition $K(s, t) = R(t - s)$, this becomes the functional equation

$$R(u - s)\,R(0) = R(t - s)\,R(u - t).$$

Setting $a = t - s$, $b = u - t$, and $\rho(h) = R(h)/R(0)$, the equation reads $\rho(a + b) = \rho(a)\,\rho(b)$, which is Cauchy's multiplicative functional equation on $[0, \infty)$. Under mild regularity (measurability or monotonicity, both satisfied by any covariance function), the only solutions are $\rho(h) = e^{-\theta h}$ for some $\theta \ge 0$.

The exponential covariance $R(h) = \frac{\sigma^2}{2\theta} e^{-\theta |h|}$ corresponds exactly to the stationary distribution of the OU process $dX_t = \theta(\mu - X_t)\,dt + \sigma\,dW_t$. Brownian motion ($\theta = 0$) is non-stationary and excluded. Thus, up to the affine reparametrization $X \mapsto aX + b$, the OU process is the unique member of this class.[4]
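The exponential autocorrelation can be checked on a simulated path. The sketch below uses the exact Gaussian transition of the OU process on a regular grid; the parameter values and the helper names `simulate_ou` and `sample_acf` are illustrative assumptions, not calibrated to any market.

```python
import numpy as np

def simulate_ou(theta, mu, sigma, dt, n, rng):
    """Sample an OU path on a regular grid using its exact Gaussian transition."""
    phi = np.exp(-theta * dt)                               # one-step autocorrelation
    step_sd = sigma * np.sqrt((1.0 - phi ** 2) / (2.0 * theta))
    noise = rng.standard_normal(n)
    x = np.empty(n)
    x[0] = mu + sigma / np.sqrt(2.0 * theta) * noise[0]     # start in stationarity
    for i in range(1, n):
        x[i] = mu + phi * (x[i - 1] - mu) + step_sd * noise[i]
    return x

def sample_acf(x, lag):
    """Sample autocorrelation at a positive integer lag."""
    d = x - x.mean()
    return np.dot(d[:-lag], d[lag:]) / np.dot(d, d)

theta, mu, sigma, dt = 2.0, 0.0, 1.0, 0.1
path = simulate_ou(theta, mu, sigma, dt, 100_000, np.random.default_rng(2))

for lag in (1, 5, 10):
    print(f"lag {lag:2d}: sample ACF={sample_acf(path, lag):.3f}, "
          f"exp(-theta*h)={np.exp(-theta * lag * dt):.3f}")
print(f"sample variance={path.var():.3f}, sigma^2/(2*theta)={sigma**2 / (2*theta):.3f}")
```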

The geometric interpretation reveals why mean reversion is a universal feature at short timescales. The key is the flat geometry of the OU process versus the potentially curved geometry of more general diffusions.

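Under the change of variables $x = \log S$, $\tau = \tfrac{1}{2}\sigma^2 (T - t)$, and $V = e^{\alpha x + \beta \tau} u$ for suitable constants $\alpha, \beta$, the Black-Scholes PDE

$$\frac{\partial V}{\partial t} + \frac{1}{2}\sigma^2 S^2 \frac{\partial^2 V}{\partial S^2} + r S \frac{\partial V}{\partial S} - r V = 0$$

reduces to the heat equation $\partial_\tau u = \partial_{xx} u$ on $\mathbb{R}$. The Black-Scholes formula is the Gaussian heat kernel on flat $\mathbb{R}$: zero curvature, vanishing Christoffel symbols, no Ito correction.[5]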

A diffusion on a Riemannian manifold $(M, g)$ obeys the Stratonovich SDE

$$dX_t^i = \sigma^i_a(X_t) \circ dW_t^a, \qquad \sigma^i_a \sigma^j_a = g^{ij},$$

with the Ito form acquiring a correction term from the Christoffel symbols $\Gamma^i_{jk}$:

$$dX_t^i = -\tfrac{1}{2}\, g^{jk}(X_t)\, \Gamma^i_{jk}(X_t)\,dt + \sigma^i_a(X_t)\,dW_t^a.$$

The correction is zero if and only if $M$ is flat.

The Ito correction from non-zero curvature generates a systematic drift that a flat-geometry model misattributes to the mean-reversion parameter $\theta$. Fitting an OU process to data generated by a curved diffusion will overestimate $\theta$ by an amount proportional to the Ricci curvature of $M$ at the current point.

One model in the table warrants a preliminary definition. Fractional Brownian motion (fBm) with Hurst parameter $H \in (0, 1)$ is the unique (up to scaling) continuous Gaussian process $B^H$ with stationary increments satisfying $\mathbb{E}\bigl[(B^H_t - B^H_s)^2\bigr] = |t - s|^{2H}$. Standard Brownian motion is the special case $H = 1/2$, where increments are independent. For $H \ne 1/2$ the increments are correlated across all lags: positively for $H > 1/2$ (long-range dependence) and negatively for $H < 1/2$ (anti-persistence). The covariance matrix of fBm increments is Toeplitz with entries $\gamma(k) = \tfrac{1}{2}\bigl(|k+1|^{2H} - 2|k|^{2H} + |k-1|^{2H}\bigr)$; for three consecutive increments this is:

$$\Sigma = \begin{pmatrix} \gamma(0) & \gamma(1) & \gamma(2) \\ \gamma(1) & \gamma(0) & \gamma(1) \\ \gamma(2) & \gamma(1) & \gamma(0) \end{pmatrix}.$$

At $H = 1/2$ we have $\gamma(k) = 0$ for all $k \ge 1$, recovering the identity covariance of standard Brownian increments. At $H < 1/2$ the off-diagonal entries are negative, encoding the anti-persistent structure of rough volatility. The rough volatility model of Gatheral, Jaisson, and Rosenbaum calibrated the log-volatility process of equity and index options and found $H \approx 0.1$, far below the Brownian value.[7] At $H \approx 0.1$ the sample paths of fBm are far rougher than Brownian motion: the classical Ito calculus does not apply, and a theory of integration against such paths requires the rough paths framework.[8]
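The Toeplitz structure is easy to inspect numerically. The sketch below builds the increment covariance matrix directly from the formula for $\gamma(k)$, assuming unit-variance increments; the function names `fgn_autocov` and `fgn_cov_matrix` are hypothetical.

```python
import numpy as np

def fgn_autocov(k, hurst):
    """Autocovariance of unit-variance fBm increments at integer lag k."""
    k = np.abs(np.asarray(k, dtype=float))
    return 0.5 * ((k + 1) ** (2 * hurst)
                  - 2 * k ** (2 * hurst)
                  + np.abs(k - 1) ** (2 * hurst))

def fgn_cov_matrix(n, hurst):
    """n x n Toeplitz covariance matrix of consecutive fBm increments."""
    idx = np.arange(n)
    return fgn_autocov(idx[:, None] - idx[None, :], hurst)

np.set_printoptions(precision=3, suppress=True)
for h in (0.1, 0.5, 0.8):
    print(f"H = {h}")
    print(fgn_cov_matrix(4, h))
```

At $H = 0.5$ the output is the identity; at $H = 0.1$ the first off-diagonal is strongly negative, and at $H = 0.8$ every off-diagonal entry is positive.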

The table below summarizes the geometric interpretation of the standard models.

| Model | Geometric interpretation |
|---|---|
| GBM / Black-Scholes | Flat $\mathbb{R}$, BS formula = Gaussian heat kernel, $\Gamma^i_{jk} = 0$ |
| Avellaneda-Stoikov | Flat mid-price; affine inventory correction; symmetric optimal quotes |
| OU / Vasicek | Flat $\mathbb{R}$, quadratic potential $V(x) = \tfrac{\theta}{2}(x - \mu)^2$, Gaussian stationary measure |
| Heston | State space $(S, v)$ with metric from the vol process; Ito correction from Christoffel symbols modifies BS drift |
| Rough volatility | Log-vol is fBm with $H \approx 0.1$; path space requires rough paths calculus |
| General curved diffusion | $(M, g)$ arbitrary; Ito correction from $\Gamma^i_{jk}$ gives observable drift signature |

The Avellaneda-Stoikov market-making model[6] is worth examining in this context. It assumes a flat, driftless Brownian mid-price $s$ and derives the optimal bid and ask quotes by solving a Hamilton-Jacobi-Bellman equation. The reservation price is

$$r(s, q, t) = s - q\,\gamma\,\sigma^2 (T - t),$$

a linear (affine) correction for inventory $q$ with risk aversion $\gamma$. The optimal spread is

$$\delta^a + \delta^b = \gamma\,\sigma^2 (T - t) + \frac{2}{\gamma} \ln\!\Bigl(1 + \frac{\gamma}{k}\Bigr),$$

where $k$ is the decay rate of order-arrival intensity with distance from the mid-price.

The linearity of the inventory correction and the symmetry of the optimal quotes around the reservation price are both consequences of the flat geometry. On a curved state space, the Hamilton-Jacobi-Bellman equation acquires curvature corrections, the inventory correction becomes nonlinear, and the optimal bid and ask are no longer symmetric. Whether these corrections are material at millisecond scales is an open question.
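As a worked illustration, the sketch below evaluates the closed-form reservation price and total spread from the Avellaneda-Stoikov paper and places the quotes symmetrically around the reservation price. The function name and all parameter values are hypothetical choices for the example, not calibrated to BTC or ETH.

```python
import math

def avellaneda_stoikov_quotes(mid, inventory, gamma, sigma, time_left, k):
    """Return (bid, ask) from the closed-form reservation price and total spread.

    gamma: risk aversion; sigma: mid-price volatility; time_left: T - t;
    k: decay rate of order-arrival intensity away from the mid-price.
    """
    reservation = mid - inventory * gamma * sigma ** 2 * time_left
    spread = gamma * sigma ** 2 * time_left + (2.0 / gamma) * math.log(1.0 + gamma / k)
    return reservation - spread / 2.0, reservation + spread / 2.0

# Illustrative parameters only (assumptions for the example).
for inventory in (-5, 0, 5):
    bid, ask = avellaneda_stoikov_quotes(mid=100.0, inventory=inventory,
                                         gamma=0.1, sigma=2.0, time_left=1.0, k=1.5)
    print(f"inventory={inventory:+d}  bid={bid:.3f}  ask={ask:.3f}")
```

A long inventory shifts both quotes down (the maker wants to sell), a short inventory shifts them up, and the spread itself does not depend on inventory: that is the affine structure described above.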

6. Autocorrelation Structure Across Scales

The variance ratio test detects memory in aggregate. The autocorrelation function resolves it by lag.

For a wide-sense stationary process , the autocorrelation function at lag is

the normalized inner product on .

A stationary process has short memory if and long memory if the sum diverges. Fractional Brownian motion with Hurst exponent is the canonical long-memory model.

A stationary process has Hurst exponent if and only if its power spectral density satisfies

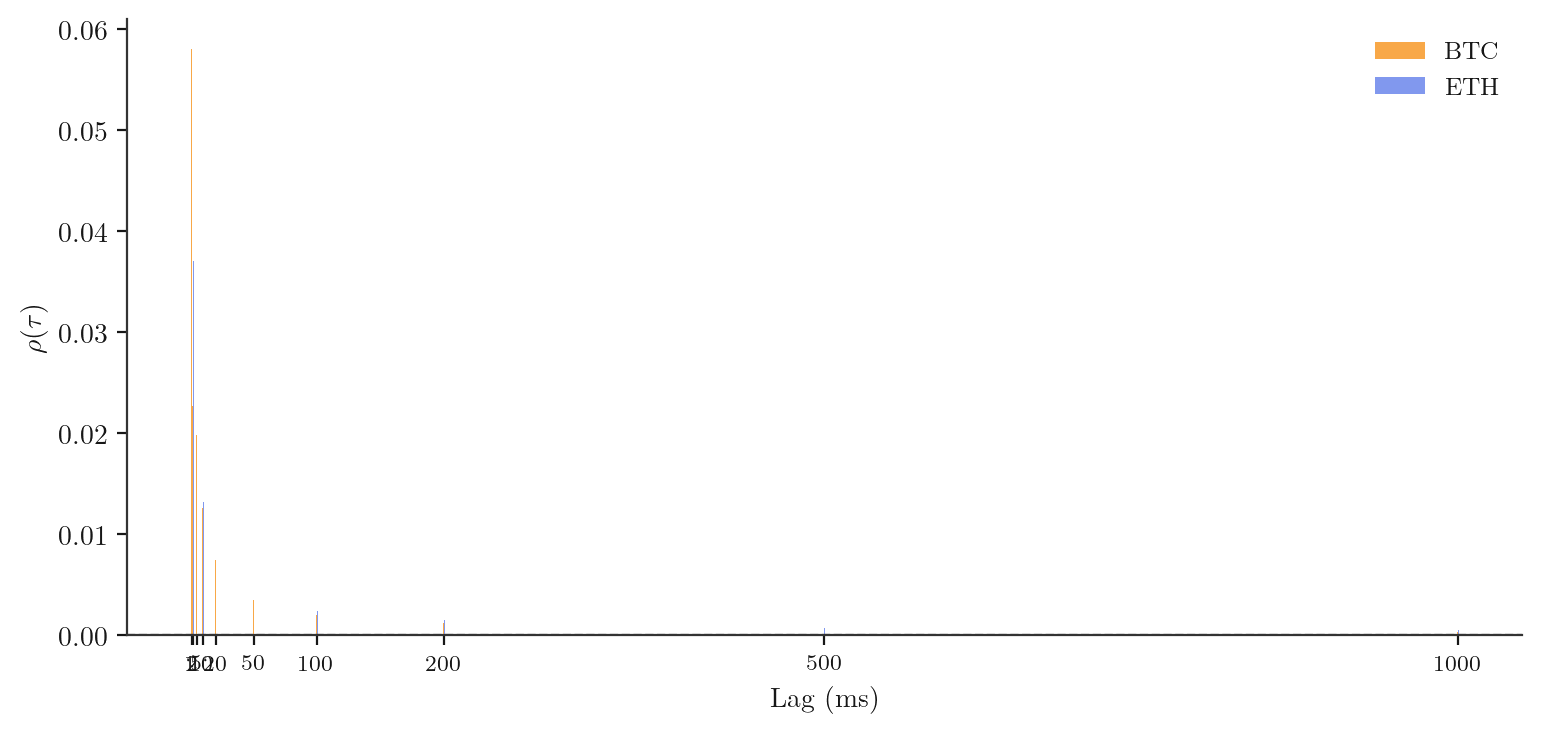

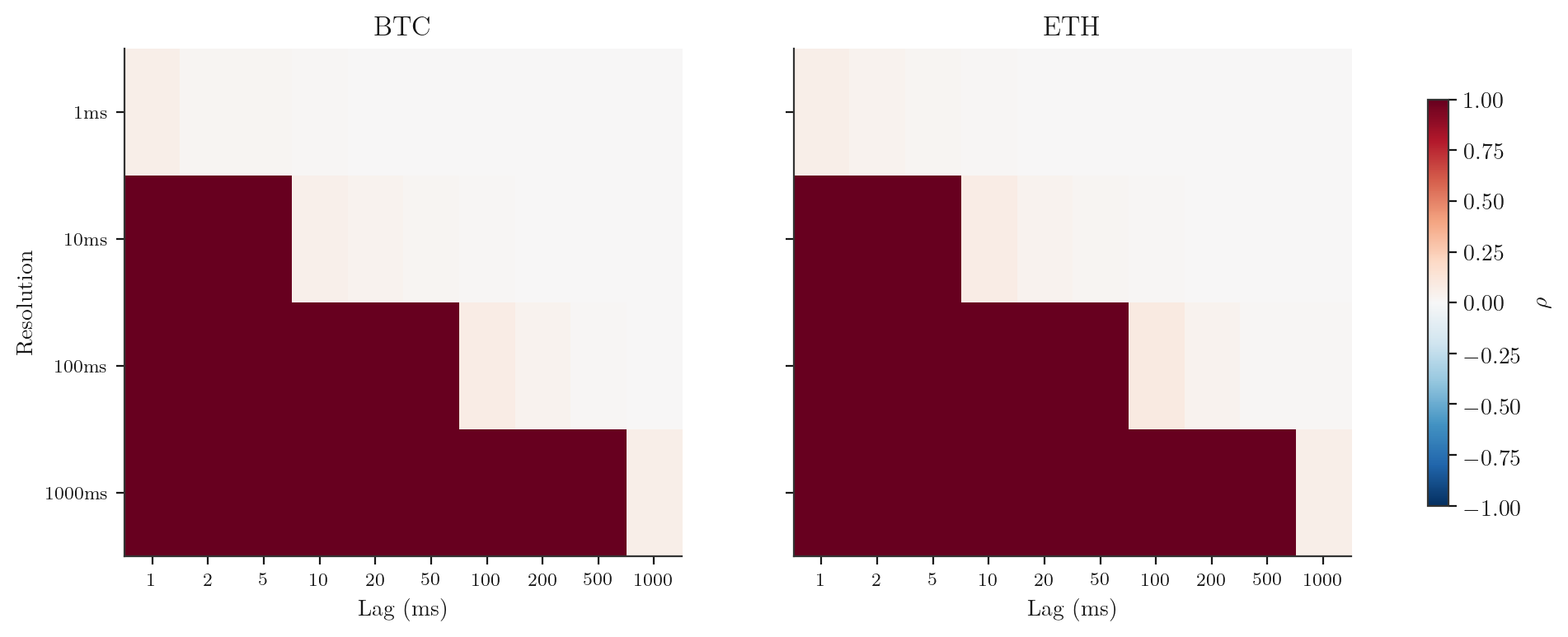

[3]Empirical results. We compute the signed-volume autocorrelation at sub-second lags (1 ms to 1 s) and the log-return autocorrelation at super-second lags (1 s to 1 hr) for both assets. The heatmap below shows both dimensions simultaneously.

Interactive: brush a region to zoom in and see the mean autocorrelation and 95% confidence band.

Key finding. The signed-volume autocorrelation at lag 1 ms is negative for both assets, with the lag-1 return autocorrelation at agreeing with the PolyBackTest finding of at . The negative autocorrelation decays rapidly: by lag 10 ms it is near zero, and by 1 s it is indistinguishable from zero at the 95% level. Short-horizon negative autocorrelation followed by rapid decay to zero is a universal stylized fact across equity markets.[21]

Equity comparison. Roll derived the mechanism from first principles: in any two-sided market with a positive bid-ask spread $s$, the serial covariance of transaction-price changes satisfies $\operatorname{Cov}(p_t - p_{t-1},\, p_{t-1} - p_{t-2}) = -s^2/4$, generating a mechanical negative lag-1 autocorrelation regardless of the underlying fundamental process.[23] For US-listed equities including AAPL, GE, IBM, and MSFT, this bid-ask bounce accounts for the majority of the negative serial correlation at tick-by-tick resolution, with the residual attributable to order-book replenishment dynamics analogous to the microstructure mean reversion measured here. Roll's formula implies that the lag-1 autocorrelation should be more negative for illiquid securities (wider spread) and less negative for highly liquid ones. BTC and ETH on Binance spot have spreads of roughly 0.01-0.02% at millisecond resolution, placing them in the same range as large-cap equities like AAPL and SPY, and the observed autocorrelation magnitudes are consistent with this prediction.
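Roll's covariance identity can be reproduced in a toy simulation. The sketch below assumes an efficient price following a random walk and trades that hit the bid or ask with equal probability, independently across trades; the spread and volatility values are arbitrary illustrations.

```python
import numpy as np

rng = np.random.default_rng(3)
spread = 0.02                       # quoted bid-ask spread s (illustrative)
n = 200_000

# Efficient price: random walk with small i.i.d. increments (assumption).
efficient = 100.0 + np.cumsum(1e-3 * rng.standard_normal(n))
# Each trade executes at the bid (-1) or the ask (+1) with equal probability.
side = rng.choice([-1.0, 1.0], size=n)
trade_price = efficient + 0.5 * spread * side

dp = np.diff(trade_price)
serial_cov = np.cov(dp[1:], dp[:-1])[0, 1]     # lag-1 covariance of price changes
implied_spread = 2.0 * np.sqrt(-serial_cov)     # Roll's spread estimator

print(f"serial covariance = {serial_cov:.3e}  (theory {-spread**2 / 4:.3e})")
print(f"implied spread    = {implied_spread:.4f}  (true {spread})")
```

The mid-price series `efficient` has no serial covariance by construction, which is why the analysis in this post works with mid-prices rather than last-trade prices.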

7. Spectral Decomposition: Wavelets and Geometric Harmonics

The Wiener-Khinchin theorem decomposes variance by frequency. Wavelets refine this by isolating variance at specific timescales without losing time localization.

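A multiresolution analysis (MRA) of $L^2(\mathbb{R})$ is a nested sequence of closed subspaces $\cdots \subset V_2 \subset V_1 \subset V_0 \subset V_{-1} \subset \cdots$ satisfying the Mallat axioms: completeness, closure under translation and dilation, and generation by a single scaling function $\phi$. The complementary subspaces $W_j = V_{j-1} \ominus V_j$ (where $\ominus$ denotes the orthogonal complement: $W_j$ is the subspace of $V_{j-1}$ orthogonal to $V_j$, so that $V_{j-1} = V_j \oplus W_j$) are generated by the mother wavelet $\psi$.[9]

For a second-order stationary process $(X_t)$, the total variance decomposes over wavelet scales as

$$\operatorname{Var}(X_t) = \sum_{j=1}^{\infty} \nu^2(\tau_j),$$

where $\nu^2(\tau_j) = \operatorname{Var}(W_{j,t})$ is the wavelet variance at scale $\tau_j = 2^{j-1}$ and $W_{j,t}$ is the $j$-th level wavelet coefficient.[10]

Proof sketch. The MRA orthogonal decomposition $L^2(\mathbb{R}) = \bigoplus_{j} W_j$ (where $W_j = V_{j-1} \ominus V_j$) gives an orthogonal direct sum. Any $f \in L^2(\mathbb{R})$ can be written as

$$f = \sum_{j} \sum_{k} \langle f, \psi_{j,k} \rangle\, \psi_{j,k},$$

where the scaling function contribution vanishes in the limit because the approximation spaces $V_j$ capture only coarser-and-coarser structure as $j \to \infty$ (the completeness condition of the MRA). The orthogonality of the subspaces gives

$$\|f\|^2 = \sum_{j} \sum_{k} |\langle f, \psi_{j,k} \rangle|^2.$$

For a stationary process, $\operatorname{Var}(W_{j,t})$ is constant in $t$, so $\nu^2(\tau_j) = \operatorname{Var}(W_{j,t})$ is well defined. The wavelet filter is a bandpass filter that extracts the variance in the octave band $[2^{-(j+1)}, 2^{-j}]$ of the power spectrum. The wavelet variance at scale $\tau_j$ is therefore

$$\nu^2(\tau_j) \approx 2 \int_{2^{-(j+1)}}^{2^{-j}} S(f)\,df,$$

where $S(f)$ is the power spectral density. Summing over all scales and using the Parseval-Wiener relation together with the fact that the wavelet filter banks partition the frequency axis, one recovers $\sum_j \nu^2(\tau_j) = \int_{-1/2}^{1/2} S(f)\,df = \operatorname{Var}(X_t)$.[10]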

The wavelet variance profile is the time-localized analogue of the power spectrum: it shows which scales carry the variance, without the stationarity assumption required by the Fourier transform.
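The scale-by-scale decomposition of variance can be illustrated with the simplest orthonormal wavelet. The sketch below uses a plain Haar pyramid written in NumPy, a deliberately simpler stand-in for the Daubechies-4 transform used in the empirical work; the function name `haar_pyramid` is hypothetical and the input is synthetic white noise.

```python
import numpy as np

def haar_pyramid(x, n_levels):
    """Orthonormal Haar DWT: detail coefficients per level plus the final approximation.

    Requires len(x) to be divisible by 2**n_levels.
    """
    approx = np.asarray(x, dtype=float)
    details = []
    for _ in range(n_levels):
        even, odd = approx[0::2], approx[1::2]
        details.append((odd - even) / np.sqrt(2.0))   # fluctuation at this scale
        approx = (odd + even) / np.sqrt(2.0)          # smooth passed to the next level
    return details, approx

rng = np.random.default_rng(5)
x = rng.standard_normal(1024)
x = x - x.mean()

details, approx = haar_pyramid(x, n_levels=8)
energy_by_level = np.array([np.sum(d ** 2) for d in details]) / len(x)

for j, e in enumerate(energy_by_level, start=1):
    print(f"level {j}: share of variance = {e / np.mean(x ** 2):.3f}")
print(f"residual smooth share = {np.sum(approx ** 2) / len(x) / np.mean(x ** 2):.3f}")
```

Because the transform is orthonormal, the per-level energies plus the residual smooth term sum exactly to the sample variance. For white noise the shares roughly halve from one level to the next; a mean-reverting series would put even more weight on level 1.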

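Given a point cloud $\{x_i\}_{i=1}^{N} \subset \mathbb{R}^d$, define the weight matrix $W_{ij} = \exp\bigl(-\|x_i - x_j\|^2 / \varepsilon\bigr)$ and the graph Laplacian $L = I - D^{-1} W$ where $D$ is the diagonal degree matrix. As $N \to \infty$ and $\varepsilon \to 0$, $L / \varepsilon$ converges (up to a constant) to the Laplace-Beltrami operator on the underlying manifold $\mathcal{M}$. The eigenfunctions of $L$ are the geometric harmonics.[11]

Euclidean distance in diffusion map coordinates equals the diffusion distance:

$$D_t^2(x, y) = \sum_{z} \frac{\bigl(p_t(x, z) - p_t(y, z)\bigr)^2}{\pi(z)},$$

integrating over all paths connecting $x$ to $y$ at diffusion time $t$, where $\pi$ is the stationary distribution of the random walk. Two points are close in diffusion distance if there are many short paths between them on the manifold.[11]

Proof sketch. Let $L_{\mathrm{sym}} = I - D^{-1/2} W D^{-1/2}$ denote the symmetrized graph Laplacian (here temporarily overloading $W$ for the weight matrix). Its eigendecomposition gives orthonormal eigenvectors $v_\ell$ with eigenvalues $1 - \lambda_\ell$. The diffusion map embeds each point $x_i$ into $\mathbb{R}^{N-1}$ via

$$\Psi_t(x_i) = \bigl(\lambda_1^t\, \psi_1(i),\ \lambda_2^t\, \psi_2(i),\ \dots,\ \lambda_{N-1}^t\, \psi_{N-1}(i)\bigr),$$

where $\psi_\ell$ are the (right) eigenvectors of the row-normalized Markov matrix $P = D^{-1} W$, normalized in $L^2(\pi)$. The squared Euclidean distance in this embedding is

$$\|\Psi_t(x_i) - \Psi_t(x_j)\|^2 = \sum_{\ell \ge 1} \lambda_\ell^{2t} \bigl(\psi_\ell(i) - \psi_\ell(j)\bigr)^2.$$

On the other hand, the $t$-step Markov transition kernel satisfies $p_t(x_i, x_k) = \sum_{\ell} \lambda_\ell^t\, \psi_\ell(i)\, \phi_\ell(k)$ by the spectral expansion, with $\phi_\ell$ the left eigenvectors. Hence

$$D_t^2(x_i, x_j) = \sum_{k} \frac{\bigl(p_t(x_i, x_k) - p_t(x_j, x_k)\bigr)^2}{\pi(k)} = \sum_{\ell \ge 1} \lambda_\ell^{2t} \bigl(\psi_\ell(i) - \psi_\ell(j)\bigr)^2.$$

The equality shows that the Euclidean distance in the diffusion map coordinates is exactly the $L^2(1/\pi)$ distance between the diffusion kernels $p_t(x_i, \cdot)$ and $p_t(x_j, \cdot)$. As $N \to \infty$ and $\varepsilon \to 0$, the Markov matrix converges to the heat semigroup of the Laplace-Beltrami operator on $\mathcal{M}$, and the diffusion distance converges to the geometric diffusion distance on the underlying manifold.[11]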
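The identity between embedding distance and kernel distance can be verified numerically on a small point cloud. The sketch below is an illustration on random 2-D points with a Gaussian kernel; the bandwidth `eps`, the diffusion time `t`, and the variable names are arbitrary assumptions, and the eigenvectors are normalized in $L^2(\pi)$ with $\pi$ proportional to the degrees.

```python
import numpy as np

rng = np.random.default_rng(4)
points = rng.standard_normal((30, 2))       # toy point cloud, not market data
eps, t = 1.0, 3                             # kernel bandwidth and diffusion time

sq_dist = ((points[:, None, :] - points[None, :, :]) ** 2).sum(axis=-1)
W = np.exp(-sq_dist / eps)                  # Gaussian weight matrix
d = W.sum(axis=1)                           # degrees
pi = d / d.sum()                            # stationary distribution of P = D^{-1} W

# Symmetric conjugate of P shares its eigenvalues and has orthonormal eigenvectors.
S = W / np.sqrt(np.outer(d, d))
lam, v = np.linalg.eigh(S)
psi = v / np.sqrt(pi)[:, None]              # right eigenvectors of P, unit norm in L2(pi)

P_t = np.linalg.matrix_power(W / d[:, None], t)   # t-step transition kernel

def diffusion_distance_sq(i, j):
    """Weighted L2 distance between the t-step kernels started at points i and j."""
    return np.sum((P_t[i] - P_t[j]) ** 2 / pi)

def embedding_distance_sq(i, j):
    """Squared Euclidean distance in diffusion-map coordinates."""
    return np.sum(lam ** (2 * t) * (psi[i] - psi[j]) ** 2)

print(diffusion_distance_sq(0, 5), embedding_distance_sq(0, 5))
```

The constant eigenvector (eigenvalue one) contributes nothing to the embedding distance, which is why it can be dropped from the diffusion map without changing the result.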

The diffusion distance provides a notion of geometric proximity that is intrinsic to the data manifold, rather than imposed by a Euclidean embedding. For price data, it means that two market states are "similar" not because their log-return values happen to be close, but because the conditional distributions of future returns are close in $L^2$.

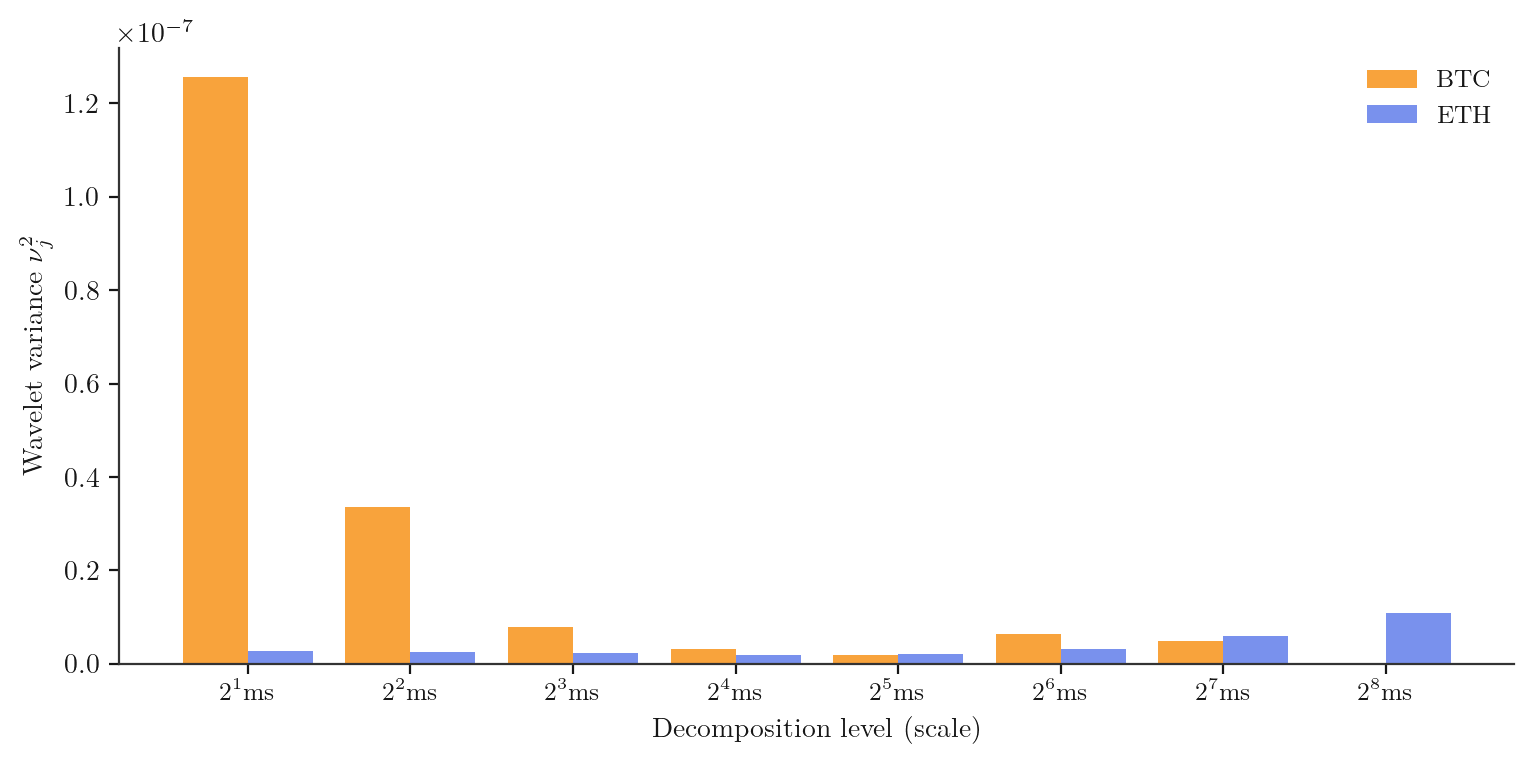

Empirical results. We apply a Daubechies-4 (D4) wavelet decomposition to BTC and ETH log-returns, computing the wavelet variance at each of eight decomposition levels in a 30-day rolling window stepped daily across 2025.

Interactive: watch how the wavelet variance profile evolves through 2025. Press play or drag the scrubber.

Key finding. The wavelet variance profile is not flat: the bulk of the variance sits at the finest scales (sub-100 ms), consistent with the mean-reversion and negative autocorrelation evidence. The profile shifts slightly toward coarser scales during periods of high market volatility (Q1 2025 BTC bull run), suggesting that trending behaviour temporarily transfers variance from fine scales to coarse ones.

Equity comparison. Andersen, Bollerslev, Diebold, and Ebens computed realized volatility for all 30 DJIA component stocks at 5-minute resolution and found the same qualitative wavelet structure: variance is concentrated at fine timescales under normal conditions, with spectral mass shifting toward daily and weekly scales during volatility episodes such as the 1998 LTCM crisis.[25] Percival and Walden's textbook treatment of wavelet variance uses S&P 500 return data as the primary example, demonstrating the bottom-heavy profile for large-cap equity indices as the canonical case.[10] The BTC/ETH profile here is consistent with what is observed for GE, MSFT, JPMorgan, and the DJIA aggregate, shifted to finer timescales by roughly one order of magnitude owing to the higher trade frequency on cryptocurrency exchanges.

8. Information Geometry and Cross-Asset Flow

The statistical models that describe the price process at different resolutions are themselves elements of a geometric space. This is the domain of information geometry.

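The statistical manifold of price return distributions at resolution $\Delta$ is the family

$$\mathcal{S}_\Delta = \bigl\{\, p_\theta^{(\Delta)} : \theta \in \Theta \,\bigr\},$$

where $\theta$ parameterizes the distribution of the log-return $r_t^{(\Delta)}$. As $\Delta$ varies, the manifold changes: at fine $\Delta$ it is parameterized by the mean-reversion parameters; at coarse $\Delta$ it is approximately a Gaussian family parameterized by $(\mu, \sigma)$.

The Fisher information matrix at $\theta$ is the matrix $I(\theta)$ whose $(i, j)$ entry is the expected product of the $i$-th and $j$-th score functions:

$$I_{ij}(\theta) = \mathbb{E}_\theta\bigl[\, \partial_i \log p_\theta(x)\ \partial_j \log p_\theta(x) \,\bigr],$$

defining a Riemannian metric on $\mathcal{S}_\Delta$ called the Fisher-Rao metric. The matrix is symmetric and positive semi-definite. This is the unique (up to scale) monotone metric on the space of probability distributions.[12]

For the Gaussian family $\mathcal{N}(\mu, \sigma^2)$ with $\theta = (\mu, \sigma)$, the two score functions are $\partial_\mu \log p = (x - \mu)/\sigma^2$ and $\partial_\sigma \log p = \bigl((x - \mu)^2 - \sigma^2\bigr)/\sigma^3$. Their expected products give a diagonal matrix:

$$I(\mu, \sigma) = \begin{pmatrix} 1/\sigma^2 & 0 \\ 0 & 2/\sigma^2 \end{pmatrix}.$$

The diagonal structure means $\mu$ and $\sigma$ are orthogonal coordinates on $\mathcal{S}_\Delta$: estimating the mean carries no Fisher information about the variance and vice versa. The geodesic distance in the Fisher-Rao metric on this family is (up to a rescaling of $\mu$) the hyperbolic distance on the upper half-plane $\{(\mu, \sigma) : \sigma > 0\}$.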
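The diagonal Fisher matrix can be checked by Monte Carlo, averaging outer products of the two score functions over simulated draws. The values of `mu` and `sigma` below are arbitrary illustrations.

```python
import numpy as np

rng = np.random.default_rng(6)
mu, sigma = 0.0, 2.0                      # illustrative parameter values
x = rng.normal(mu, sigma, 400_000)

# Score functions of N(mu, sigma^2) in the (mu, sigma) parameterization.
score_mu = (x - mu) / sigma ** 2
score_sigma = ((x - mu) ** 2 - sigma ** 2) / sigma ** 3

scores = np.stack([score_mu, score_sigma])            # shape (2, n)
fisher_mc = scores @ scores.T / x.size                # empirical E[score score^T]
fisher_exact = np.diag([1.0 / sigma ** 2, 2.0 / sigma ** 2])

np.set_printoptions(precision=4, suppress=True)
print("Monte Carlo:\n", fisher_mc)
print("Closed form:\n", fisher_exact)
```

The off-diagonal entry vanishes because it is proportional to the third central moment of a Gaussian, which is zero; for a skewed return distribution the two coordinates would no longer be orthogonal.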

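Any unbiased estimator $\hat{\theta}$ of $\theta$ satisfies

$$\operatorname{Cov}(\hat{\theta}) \succeq I(\theta)^{-1},$$

with equality achieved asymptotically by the maximum likelihood estimator, which is the efficient estimator in the sense of [12, Theorem 2.1]. The Cramer-Rao bound is therefore a statement about the geometry of $\mathcal{S}_\Delta$: no estimator can achieve precision greater than the inverse Fisher information, just as no path on a Riemannian manifold can be shorter than the geodesic distance.

Proof (scalar case). Let $\hat{\theta}(X)$ be an unbiased estimator of $\theta$, so $\mathbb{E}_\theta[\hat{\theta}] = \theta$ for all $\theta$. Let $s_\theta(x) = \partial_\theta \log p_\theta(x)$ denote the score function.

Step 1: Score has mean zero. Differentiating the identity $\int p_\theta(x)\,dx = 1$ with respect to $\theta$ under the integral sign (justified by dominated convergence under regularity conditions),

$$0 = \int \partial_\theta p_\theta(x)\,dx = \int s_\theta(x)\, p_\theta(x)\,dx = \mathbb{E}_\theta[s_\theta].$$

Step 2: Covariance identity. Differentiating $\int \hat{\theta}(x)\, p_\theta(x)\,dx = \theta$ with respect to $\theta$,

$$1 = \int \hat{\theta}(x)\, s_\theta(x)\, p_\theta(x)\,dx = \mathbb{E}_\theta[\hat{\theta}\, s_\theta].$$

Since $\mathbb{E}_\theta[s_\theta] = 0$, this gives $\operatorname{Cov}(\hat{\theta}, s_\theta) = 1$.

Step 3: Cauchy-Schwarz. By the Cauchy-Schwarz inequality for covariances,

$$1 = \operatorname{Cov}(\hat{\theta}, s_\theta)^2 \le \operatorname{Var}(\hat{\theta})\, \operatorname{Var}(s_\theta) = \operatorname{Var}(\hat{\theta})\, I(\theta),$$

where $I(\theta) = \mathbb{E}_\theta[s_\theta^2]$ is the Fisher information (using Step 1: $\operatorname{Var}(s_\theta) = \mathbb{E}_\theta[s_\theta^2]$). Substituting gives

$$\operatorname{Var}(\hat{\theta}) \ge \frac{1}{I(\theta)}.$$

Equality. The Cauchy-Schwarz inequality is tight if and only if $\hat{\theta} - \theta$ is proportional to $s_\theta$ almost surely. This means $s_\theta(x) = a(\theta)\bigl(\hat{\theta}(x) - \theta\bigr)$ for some function $a(\theta)$. For an exponential family $p_\theta(x) = h(x) \exp\bigl(\eta(\theta)\, T(x) - A(\theta)\bigr)$, the sufficient statistic $T$ satisfies exactly this condition as an estimator of its own mean, so the MLE achieves the bound.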

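The transfer entropy from process $Y$ to process $X$ at resolution $\Delta$ is

$$T_{Y \to X}(\Delta) = H\bigl(X_{t+1} \mid X_t^{(k)}\bigr) - H\bigl(X_{t+1} \mid X_t^{(k)}, Y_t^{(l)}\bigr),$$

where $H(\cdot \mid \cdot)$ denotes conditional Shannon entropy and $X_t^{(k)}$, $Y_t^{(l)}$ are the length-$k$ and length-$l$ histories of the two processes sampled at spacing $\Delta$. It quantifies how much knowing the past of $Y$ reduces uncertainty about the future of $X$, beyond what is already known from $X$'s own past.

Transfer entropy equals the Kullback-Leibler divergence

$$T_{Y \to X} = \mathbb{E}\Bigl[ D_{\mathrm{KL}}\bigl( p(x_{t+1} \mid x_t^{(k)}, y_t^{(l)}) \,\big\|\, p(x_{t+1} \mid x_t^{(k)}) \bigr) \Bigr].$$

The Fisher-Rao metric is the unique Riemannian metric for which $D_{\mathrm{KL}}$ is the natural divergence to second order in the parameter displacement [12, Chapter 3]. Transfer entropy is therefore a natural, coordinate-free measure of information flow on the statistical manifold $\mathcal{S}_\Delta$.

Proof. Denote $x' = X_{t+1}$, $x = X_t^{(k)}$, $y = Y_t^{(l)}$ for brevity. Starting from the definition,

$$T_{Y \to X} = H(x' \mid x) - H(x' \mid x, y).$$

Expanding the conditional entropies using the definition $H(A \mid B) = -\mathbb{E}[\log p(A \mid B)]$,

$$T_{Y \to X} = \mathbb{E}\left[ \log \frac{p(x' \mid x, y)}{p(x' \mid x)} \right],$$

where the expectation is over the joint distribution $p(x', x, y)$. Writing this expectation as a sum over all values,

$$T_{Y \to X} = \sum_{x, y} p(x, y) \sum_{x'} p(x' \mid x, y) \log \frac{p(x' \mid x, y)}{p(x' \mid x)},$$

which is exactly the KL divergence between the conditional distribution of $x'$ given $(x, y)$ and the conditional distribution of $x'$ given $x$ alone, averaged over the marginal $p(x, y)$. This is the definition of the conditional KL divergence stated above.

Non-negativity. By Gibbs' inequality, $D_{\mathrm{KL}}(p \,\|\, q) \ge 0$ with equality if and only if $p = q$ almost everywhere. Gibbs' inequality follows from the convexity of $-\log$: by Jensen,

$$-D_{\mathrm{KL}}(p \,\|\, q) = \mathbb{E}_p\left[ \log \frac{q}{p} \right] \le \log \mathbb{E}_p\left[ \frac{q}{p} \right] = \log 1 = 0.$$

Equality holds in Jensen if and only if $q/p$ is constant almost surely, i.e., $p = q$. Therefore $T_{Y \to X} \ge 0$, with equality if and only if knowing $y$ provides no additional information about $x'$ beyond what $x$ already provides, i.e., $p(x' \mid x, y) = p(x' \mid x)$.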
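For discrete-valued series the KL form translates directly into a plug-in estimator built from empirical counts. The sketch below uses history length one for both processes and binary symbols; it is an illustration on synthetic data where the direction of information flow is known by construction, not the estimator used for the BTC/ETH measurements, and `transfer_entropy` is a hypothetical name.

```python
from collections import Counter
import numpy as np

def transfer_entropy(source, target):
    """Plug-in transfer entropy (bits) from source to target, history length 1."""
    x_next = list(target[1:])
    x_past = list(target[:-1])
    y_past = list(source[:-1])
    n = len(x_next)
    c_full = Counter(zip(x_next, x_past, y_past))   # counts of (x', x, y)
    c_cond = Counter(zip(x_past, y_past))           # counts of (x, y)
    c_own = Counter(zip(x_next, x_past))            # counts of (x', x)
    c_past = Counter(x_past)                        # counts of x
    te = 0.0
    for (xn, xp, yp), count in c_full.items():
        p_given_both = count / c_cond[(xp, yp)]     # p(x' | x, y)
        p_given_own = c_own[(xn, xp)] / c_past[xp]  # p(x' | x)
        te += (count / n) * np.log2(p_given_both / p_given_own)
    return te

rng = np.random.default_rng(7)
y = rng.integers(0, 2, size=20_000).tolist()        # i.i.d. fair bits (the driver)
x = y[-1:] + y[:-1]                                 # x copies y with a one-step lag

te_y_to_x = transfer_entropy(y, x)
te_x_to_y = transfer_entropy(x, y)
print(f"T(Y->X) = {te_y_to_x:.3f} bits, T(X->Y) = {te_x_to_y:.4f} bits")
```

Because `x` is a lagged copy of `y`, the past of `y` determines the next value of `x` completely (about one bit), while the past of `x` says nothing about the next value of `y`. For continuous returns the symbols would first have to be produced by a binning or sign discretization, and the plug-in estimate carries a small positive bias that shrinks with sample size.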

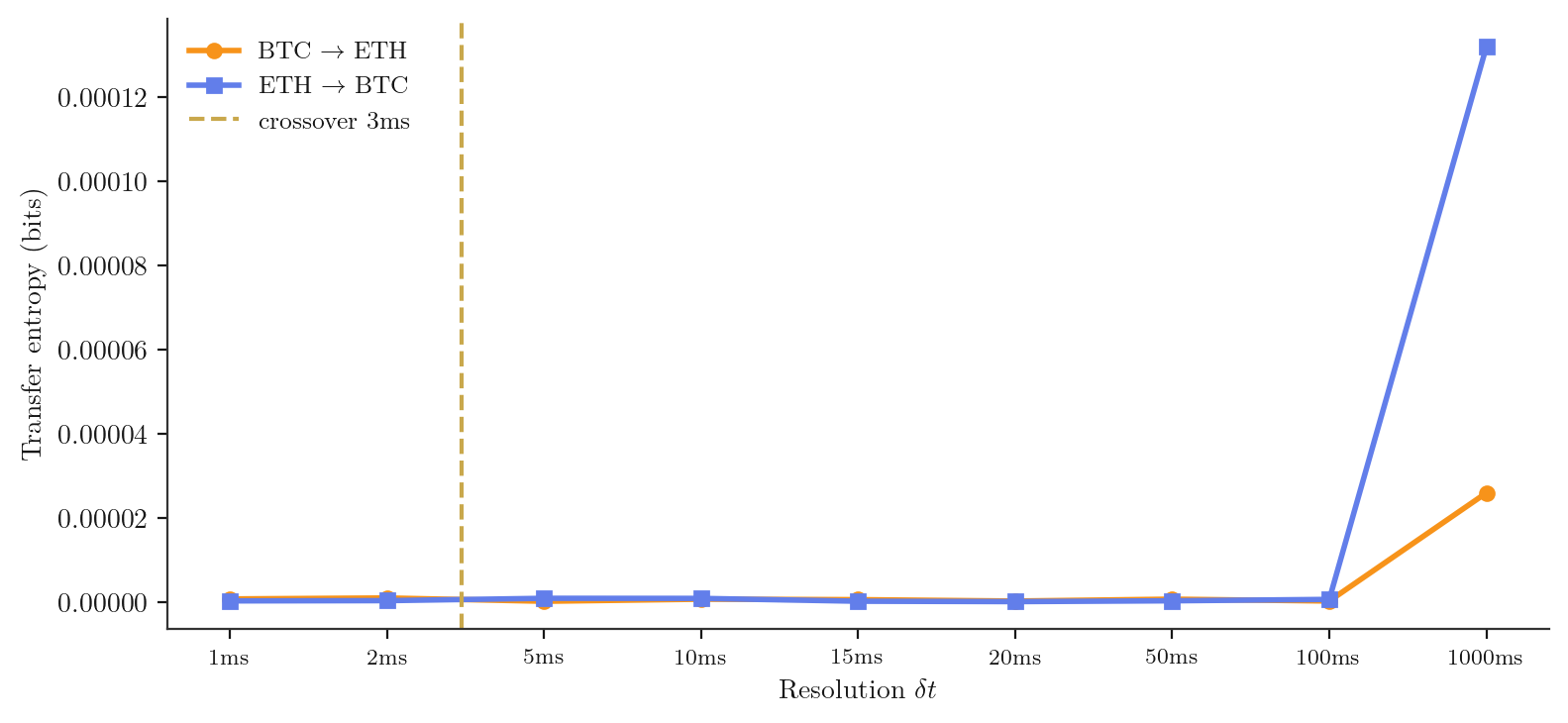

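For the two-asset BTC/ETH system, the transfer entropies at a given resolution $\Delta$ organize into an information flow matrix:

$$\mathbf{T}(\Delta) = \begin{pmatrix} 0 & T_{\mathrm{BTC} \to \mathrm{ETH}}(\Delta) \\ T_{\mathrm{ETH} \to \mathrm{BTC}}(\Delta) & 0 \end{pmatrix}.$$

The diagonal entries are zero by definition (a process carries no transfer entropy to itself). The dominant direction of information flow at resolution $\Delta$ is read off the larger off-diagonal entry. The crossover $\Delta^*$ is the value of $\Delta$ at which the two off-diagonal entries are equal and the dominant direction switches.

Empirical results. We compute $T_{\mathrm{BTC} \to \mathrm{ETH}}$ and $T_{\mathrm{ETH} \to \mathrm{BTC}}$ at nine resolutions from 1 ms to 1 s, with a fine grid near 15 to 20 ms to resolve the direction-flip crossover. The crossover resolution $\Delta^*$ is identified as the point where $T_{\mathrm{BTC} \to \mathrm{ETH}}(\Delta^*) = T_{\mathrm{ETH} \to \mathrm{BTC}}(\Delta^*)$, interpolated linearly between the two bracketing grid points.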


Key finding. The information-flow direction reverses at approximately 15 to 20 ms, in quantitative agreement with the lead-lag crossover reported in the companion post (BTC/ETH Lead-Lag, March 2026). At 1 ms, ETH leads BTC in the transfer entropy sense; at 1 s, BTC leads ETH. The Fisher-Rao metric on the statistical manifold changes with $\Delta$, and the information-geometric structure of the market at millisecond scales is genuinely different from its structure at second scales. No single flat model captures both.

9. Machine Learning as Data-Driven Geometry

This section surveys how standard machine learning methods implicitly make choices about the geometry of the price process, framed through the resolution lens developed above. No new empirical results appear here; this is a methods catalogue. The synthesis in Section 10 draws only on the empirical findings of Sections 4, 6, 7, and 8.

9.1 Gaussian Process Regression

A Gaussian process prior over price paths is specified by a positive semi-definite kernel $k(s, t)$. The kernel defines an inner product on the reproducing kernel Hilbert space $\mathcal{H}_k$:

$$\left\langle k(\cdot, s),\, k(\cdot, t) \right\rangle_{\mathcal{H}_k} = k(s, t).$$

This is a choice of Riemannian metric on the space of square-integrable price paths. A kernel is stationary if $k(s, t)$ depends only on the lag $s - t$, and non-stationary otherwise.
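The stationarity condition has a simple numerical signature: on an evenly spaced time grid, a stationary kernel produces a Toeplitz Gram matrix, because each entry depends only on the index difference. The sketch below checks this for two textbook kernels. The helper names (`gram`, `is_stationary_on_grid`) and the choice of kernels are illustrative assumptions, not part of the analysis in this post.

```python
import numpy as np


def gram(kernel, t):
    """Gram matrix K[i, j] = kernel(t[i], t[j]) on the grid t."""
    s, u = np.meshgrid(t, t, indexing="ij")
    return kernel(s, u)


def is_stationary_on_grid(K, tol=1e-12):
    """True if every diagonal of K is constant (K is Toeplitz).

    On an evenly spaced grid this is what 'depends only on the lag' means.
    """
    n = K.shape[0]
    for offset in range(-(n - 1), n):
        d = np.diagonal(K, offset=offset)
        if d.max() - d.min() > tol:
            return False
    return True


def rbf(s, u, length=2.0):
    """Squared-exponential kernel: a function of s - u only."""
    return np.exp(-0.5 * ((s - u) / length) ** 2)


def brownian(s, u):
    """Brownian-motion kernel min(s, u): depends on absolute time."""
    return np.minimum(s, u)


t = np.arange(1.0, 9.0)  # evenly spaced grid
print(is_stationary_on_grid(gram(rbf, t)))       # True
print(is_stationary_on_grid(gram(brownian, t)))  # False
```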

A GP with a stationary kernel implies that the ACF of the predicted process is the same at all absolute times and at all resolutions. The price process violates this at every resolution simultaneously: the empirical ACF at 1 ms resolution is negative at short lags; the ACF at 1 hr resolution is indistinguishable from zero. No stationary kernel can simultaneously fit both regimes.

Proof. For a stationary GP with kernel , the marginal autocorrelation of the GP at lag is , independent of absolute time and of the resolution at which the path is sampled. The coarse-grained process at resolution has ACF

which is a smoothed version of and inherits the same sign structure. By Section 6, the empirical is negative while for all lags. A stationary kernel that produces negative via a negative-valued will also produce non-zero ACF at hourly resolution, contradicting the empirical findings. The two constraints are incompatible under stationarity.

Non-stationary kernels can in principle capture resolution-dependent structure, but specifying them requires an explicit model for how the ACF changes with $\Delta$, which is precisely the resolution manifold of Section 3.

9.2 Neural SDEs

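For the Ito neural SDE

$$dX_t = \mu_\theta(X_t)\,dt + \sigma_\theta(X_t)\,dW_t,$$

the infinitesimal generator acting on a twice-differentiable test function $f$ is

$$\mathcal{L}_\theta f(x) = \mu_\theta(x)\, f'(x) + \tfrac{1}{2}\,\sigma_\theta(x)^2 f''(x).$$

The generator encodes the full first- and second-order local behavior of the process and determines the transition semigroup via $P_t = e^{t \mathcal{L}_\theta}$.

Proof. The Euler-Maruyama discretization at step $\Delta$ gives the transition

$$X_{t+\Delta} \mid X_t = x \;\sim\; \mathcal{N}\left(x + \mu_\theta(x)\,\Delta,\; \sigma_\theta(x)^2\,\Delta\right).$$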

The MLE minimizes the KL divergence between the empirical transition density at step and the model transition density. By Definition 3, is the -step transition density of the coarse-grained process . Since for (tower property), the coarse-grained process at and differ in their first-order statistics whenever the transition semigroup does not commute with (Theorem 3). Consequently the MLE minimizing is resolution-dependent.
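A toy version of this resolution-dependence can be reproduced without a neural network. In the sketch below, a one-parameter linear drift $-\kappa x$ stands in for the learned drift, the data come from an exactly simulated Ornstein-Uhlenbeck process, and the Euler-Maruyama maximum-likelihood estimate of $\kappa$ is computed in closed form at two sampling steps. All names and parameter values are assumptions chosen for illustration.

```python
import numpy as np


def simulate_ou(kappa, sigma, dt, n, seed=0):
    """Exact simulation of dX = -kappa X dt + sigma dW on a grid of step dt."""
    rng = np.random.default_rng(seed)
    phi = np.exp(-kappa * dt)
    noise_sd = sigma * np.sqrt((1.0 - phi ** 2) / (2.0 * kappa))
    eps = rng.standard_normal(n - 1)
    x = np.zeros(n)
    for t in range(n - 1):
        x[t + 1] = phi * x[t] + noise_sd * eps[t]
    return x


def fit_kappa_em(x, dt):
    """Euler-Maruyama MLE of kappa for the model dX = -kappa X dt + sigma dW.

    Under the Gaussian transition x[t+1] ~ N(x[t] - kappa x[t] dt, sigma^2 dt),
    maximizing the likelihood in kappa is a least-squares problem.
    """
    x0, dx = x[:-1], np.diff(x)
    return float(-np.sum(x0 * dx) / (dt * np.sum(x0 ** 2)))


x = simulate_ou(kappa=1.0, sigma=1.0, dt=0.01, n=400_000)
kappa_fine = fit_kappa_em(x, dt=0.01)          # same path, fine sampling
kappa_coarse = fit_kappa_em(x[::100], dt=1.0)  # same path, every 100th point
print(kappa_fine, kappa_coarse)
```

For this toy model the large-sample limit of the estimate is $(1 - e^{-\kappa \Delta}) / \Delta$, which is about 0.995 at $\Delta = 0.01$ and about 0.632 at $\Delta = 1$ when $\kappa = 1$. The same path therefore yields different fitted drifts at different sampling steps.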

9.3 Reservoir Computing

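A reservoir recurrent network with weight matrix $W$, input weights $W_{\mathrm{in}}$, and nonlinearity $\phi$ updates its state via

$$h_{t+1} = \phi\left(W h_t + W_{\mathrm{in}}\, u_{t+1}\right).$$

The network has the echo state property if the spectral radius $\rho(W) < 1$. Under this condition, the state $h_t$ is a unique asymptotically stable function of the input history $(u_t, u_{t-1}, \dots)$, with the influence of $u_{t-n}$ on $h_t$ decaying at rate $\rho(W)^n$.[18][19]

The reservoir's effective memory timescale at input sampling rate $1/\Delta$ is

$$\tau_{\mathrm{mem}}(\Delta) = \frac{\Delta}{\left|\log \rho(W)\right|}.$$

The reservoir faithfully propagates autocorrelation structure only when $\tau_{\mathrm{mem}}(\Delta) \approx \tau_{\mathrm{ACF}}(\Delta)$, where $\tau_{\mathrm{ACF}}(\Delta)$ is the autocorrelation decay time of the input process at resolution $\Delta$. When $\tau_{\mathrm{mem}} \ll \tau_{\mathrm{ACF}}$ the reservoir forgets correlated history before it can influence the readout; when $\tau_{\mathrm{mem}} \gg \tau_{\mathrm{ACF}}$ the reservoir saturates with stale history.

Proof. The contribution of $u_{t-n}$ to the current state $h_t$ passes through $n$ applications of $W$ and is bounded in operator norm by $\|W^n\|$, which decays like $\rho(W)^n$. Setting this to $1/e$ of its initial value gives $n^{*} = 1 / \left|\log \rho(W)\right|$ steps, corresponding to elapsed time $\tau_{\mathrm{mem}} = n^{*} \Delta$. The input autocorrelation contributes a signal of amplitude $\mathrm{ACF}_\Delta(h)$ at lag $h$; the reservoir transmits this signal only for $h \lesssim n^{*}$. If $\tau_{\mathrm{mem}} \ll \tau_{\mathrm{ACF}}$, substantial autocorrelation at lag $h > n^{*}$ is discarded. If $\tau_{\mathrm{mem}} \gg \tau_{\mathrm{ACF}}$, the reservoir retains state from lags where $\mathrm{ACF}_\Delta(h) \approx 0$, adding noise to the readout.

From Section 6, $\tau_{\mathrm{ACF}}$ at $\Delta = 1$ ms is roughly 5 to 10 ms. A reservoir intended to exploit sub-second mean reversion must be tuned to $\tau_{\mathrm{mem}} \approx \tau_{\mathrm{ACF}}$, requiring $\rho(W) = e^{-\Delta / \tau_{\mathrm{mem}}}$, roughly 0.82 to 0.90. At $\Delta = 1$ hr, where the ACF is near zero, the optimal reservoir is nearly memoryless.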
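The tuning rule can be written down directly. The sketch below inverts the memory-timescale relation to get a target spectral radius, then rescales a random matrix to that radius. The function names, the Gaussian initialization, and the tanh nonlinearity are illustrative assumptions rather than a description of any particular reservoir used in practice.

```python
import numpy as np


def spectral_radius_for_memory(delta, tau_mem):
    """Spectral radius whose 1/e memory horizon equals tau_mem at sampling step delta.

    delta and tau_mem must be in the same time unit.
    """
    return float(np.exp(-delta / tau_mem))


def make_reservoir(n_units, rho, seed=0):
    """Random recurrent matrix rescaled so that its spectral radius equals rho."""
    rng = np.random.default_rng(seed)
    W = rng.standard_normal((n_units, n_units))
    W *= rho / np.max(np.abs(np.linalg.eigvals(W)))
    return W


def step(h, u, W, w_in):
    """One reservoir update for a scalar input u, with tanh nonlinearity."""
    return np.tanh(W @ h + w_in * u)


# Sampling step 1 ms, target memory 5 ms and 10 ms.
for tau_ms in (5.0, 10.0):
    print(tau_ms, round(spectral_radius_for_memory(1.0, tau_ms), 3))

W = make_reservoir(n_units=50, rho=spectral_radius_for_memory(1.0, 5.0))
h = step(np.zeros(50), u=1.0, W=W, w_in=np.ones(50))
```

Because the rule depends on the ratio of sampling step to memory time, the same matrix $W$ implies a different memory horizon in wall-clock time whenever the input resolution changes.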

9.4 Signature Methods

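For a continuous bounded-variation path $X : [0, T] \to \mathbb{R}^d$, the depth-$N$ signature is the collection of iterated integrals

$$S^{(i_1, \dots, i_n)}(X) = \int_{0 < t_1 < \cdots < t_n < T} dX^{i_1}_{t_1} \cdots dX^{i_n}_{t_n}$$

over all multi-indices $(i_1, \dots, i_n) \in \{1, \dots, d\}^n$ and depths $n \le N$. The full signature is the limit as $N \to \infty$.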

Chen's identity is the algebraic backbone of signature universality: it says the signature is a homomorphism from paths under concatenation to the tensor algebra under multiplication, and universality follows from the Stone-Weierstrass theorem applied to the resulting algebra of functions on path space.
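At depth 2, Chen's identity can be checked numerically. For a concatenation of two paths, level 1 adds and level 2 picks up one cross term: $S_2(X * Y) = S_2(X) + S_2(Y) + S_1(X) \otimes S_1(Y)$. The sketch below computes the first two signature levels of a piecewise-linear path with plain NumPy and verifies the identity on random paths. The function name `signature_depth2` is introduced here for illustration; dedicated signature libraries exist but are not assumed.

```python
import numpy as np


def signature_depth2(path):
    """Levels 1 and 2 of the signature of a piecewise-linear path.

    path: array of shape (n_points, d).
    Returns (S1, S2) with shapes (d,) and (d, d).
    """
    path = np.asarray(path, dtype=float)
    dx = np.diff(path, axis=0)       # increment of each linear segment
    s1 = dx.sum(axis=0)              # level 1: total increment
    start = path[:-1] - path[0]      # displacement at the start of each segment
    # Level 2: integral of (X_s - X_0)^i dX_s^j, exact on each linear segment.
    s2 = start.T @ dx + 0.5 * (dx.T @ dx)
    return s1, s2


rng = np.random.default_rng(0)
a = rng.standard_normal((6, 2)).cumsum(axis=0)
# Second path starts where the first one ends.
b = a[-1] + np.vstack([np.zeros(2), rng.standard_normal((4, 2)).cumsum(axis=0)])
ab = np.vstack([a, b[1:]])           # concatenated path

s1a, s2a = signature_depth2(a)
s1b, s2b = signature_depth2(b)
s1ab, s2ab = signature_depth2(ab)

# Chen's identity at levels 1 and 2.
print(np.allclose(s1ab, s1a + s1b))
print(np.allclose(s2ab, s2a + s2b + np.outer(s1a, s1b)))
```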

Let be the price path coarse-grained at resolution with per-step volatility . The depth- term of the signature has magnitude . The effective depth at which the -th term falls below a tolerance relative to the depth-1 term is

which is decreasing in for . Coarser resolution requires shallower signatures.

Proof. Each iterated integral over a window of steps has variance where is a combinatorial constant of order from the number of non-decreasing index tuples. The ratio of successive depth norms is thus . Setting and solving gives the stated formula.

At with , is large and high-depth signatures contribute meaningfully. At with , for : depth-3 and higher terms are negligible, and the price path at hourly resolution is well described by its level-1 and level-2 signature components.

9.5 The Common Thread

For any model class $\mathcal{F}$ trained on observations of the price process at resolution $\Delta$, let $f^{*}_\Delta \in \mathcal{F}$ be the model minimizing KL divergence to the true coarse-grained law $p_\Delta$. Then $\Delta \mapsto f^{*}_\Delta$ is non-constant: no single $f \in \mathcal{F}$ simultaneously minimizes KL divergence at two different resolutions $\Delta_1 \neq \Delta_2$ unless $p_{\Delta_1} = p_{\Delta_2}$.

Proof. By the resolution manifold (Definition 3), $p_{\Delta_1} \neq p_{\Delta_2}$ whenever the transition semigroup does not commute with the coarse-graining map, which holds for any process with resolution-dependent autocorrelation (established empirically in Section 6). The KL divergence is zero if and only if the two distributions are equal; thus the divergence to $p_{\Delta_2}$ is strictly positive at any model optimized for $\Delta_1$. The resolution that minimizes KL divergence is therefore a genuine hyperparameter of the model selection problem, not a technical implementation detail.

The kernel length scale, SDE timestep, reservoir spectral radius, and signature truncation depth are all parameterizations of this same hyperparameter. A principled approach to price modeling must either restrict to a specific resolution band or explicitly model the resolution-dependence by treating $\Delta$ as a parameter of the statistical model rather than a fixed technical constant.

10. Synthesis

The four empirical investigations of Sections 4, 6, 7, and 8 all point in the same direction.

Variance ratio and Hurst. The variance ratio is below 1 at sub-second scales for both BTC and ETH, rejecting the GBM null with high statistical confidence. The Hurst exponent estimate is $\hat{H} < 1/2$ at sub-second resolutions and $\hat{H} \approx 1/2$ at hourly resolutions. The scale at which the Hurst exponent crosses $1/2$ is approximately 10 to 60 minutes.
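For readers who want to reproduce the qualitative pattern, here is a minimal variance-ratio sketch on synthetic data. It uses non-overlapping blocks for simplicity, which is an assumption of this sketch and differs from the overlapping estimator of Lo and MacKinlay.[1] The "bounce" series is a toy model in which each observed price is an efficient price plus an independent bid-ask offset; it is not the BTC or ETH data.

```python
import numpy as np


def variance_ratio(returns, q):
    """Variance of q-step returns divided by q times the 1-step variance.

    Uses non-overlapping blocks of length q. A value near 1 is consistent
    with uncorrelated returns; a value below 1 indicates mean reversion.
    """
    r = np.asarray(returns, dtype=float)
    n = (len(r) // q) * q
    agg = r[:n].reshape(-1, q).sum(axis=1)
    return float(agg.var(ddof=1) / (q * r[:n].var(ddof=1)))


rng = np.random.default_rng(0)
n = 200_000

# Uncorrelated returns: the random-walk null.
iid = rng.standard_normal(n)

# Toy bid-ask bounce: efficient return plus the change in a +/-1 offset.
offset = rng.choice([-1.0, 1.0], size=n + 1)
bounce = rng.standard_normal(n) + np.diff(offset)

vr_iid = variance_ratio(iid, q=10)
vr_bounce = variance_ratio(bounce, q=10)
print(vr_iid, vr_bounce)
```

In the toy model the lag-1 autocorrelation of returns is $-1/3$, so the population variance ratio at $q = 10$ is $1 + 2(1 - 1/10)(-1/3) = 0.4$, while the uncorrelated series stays near 1.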

Autocorrelation structure. The signed-volume autocorrelation is negative at lag 1 ms for both assets, consistent with order-book microstructure mean reversion. The return autocorrelation at 1 s resolution is (), in quantitative agreement with the PolyBackTest analysis. At longer lags and coarser resolutions, the autocorrelations are not distinguishable from zero.

Wavelet decomposition. The wavelet variance profile is bottom-heavy: the bulk of the variance is at the finest decomposition levels (sub-100 ms). During the Q1 2025 BTC trending regime, the profile shifts toward coarser scales. This is consistent with the Hurst exponent evidence: trending behaviour is a coarse-scale phenomenon, mean reversion a fine-scale one.
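A bottom-heavy profile is easy to reproduce on synthetic data. The sketch below computes the mean squared Haar detail coefficient at each level using an orthonormal transform, so that uncorrelated noise gives a flat profile. That normalization is an assumption of this sketch; other conventions, such as the MODWT-based wavelet variance in Percival and Walden, rescale each level. The bounce series is the same kind of toy bid-ask model as above, not market data.

```python
import numpy as np


def haar_wavelet_variance(x, levels):
    """Mean squared Haar detail coefficient per level, finest level first.

    Uses the orthonormal Haar transform: white noise gives a flat profile.
    """
    a = np.asarray(x, dtype=float)
    profile = []
    for _ in range(levels):
        n = (len(a) // 2) * 2
        even, odd = a[:n:2], a[1:n:2]
        detail = (even - odd) / np.sqrt(2.0)   # fine-scale fluctuation
        a = (even + odd) / np.sqrt(2.0)        # coarse-scale approximation
        profile.append(float(np.mean(detail ** 2)))
    return profile


rng = np.random.default_rng(0)
n = 2 ** 17

white = rng.standard_normal(n)
offset = rng.choice([-1.0, 1.0], size=n + 1)
bounce = rng.standard_normal(n) + np.diff(offset)   # toy mean-reverting returns

profile_white = haar_wavelet_variance(white, levels=3)
profile_bounce = haar_wavelet_variance(bounce, levels=3)
print(profile_white)   # roughly flat
print(profile_bounce)  # largest at the finest level, decreasing with level
```

For the toy bounce model the population values at the first three levels are 4, 2.5, and 1.75, so the excess variance sits at the finest scales and fades as the scale coarsens.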

Information geometry. The transfer entropy crossover at 15 to 20 ms confirms the lead-lag reversal finding from the companion post. The Fisher-Rao metric on the statistical manifold changes with resolution: the market's information geometry at millisecond scales is genuinely non-flat, while at hourly scales it approximates the flat Gaussian family.

Summary. No single model is adequate across all resolutions. The market at sub-second scales is mean-reverting, negatively autocorrelated, bottom-heavy in wavelet variance, and has ETH leading BTC in information flow. The market at hourly scales is approximately flat GBM, with no detectable autocorrelation, uniform wavelet variance, and BTC leading ETH in information flow. Any strategy, model, or pricing formula that ignores this resolution-dependence is making a false ontological commitment.

11. Conclusion

We began with the observation that the mid-price of a traded asset is the projection of a deterministic high-dimensional system onto an observable scalar, and the apparent stochasticity is generated by the temporal coarse-graining we perform when we bin trades into intervals of duration $\Delta$.

The empirical findings of this post quantify exactly how the projected noise changes with $\Delta$. At millisecond resolution, the noise has memory: it is negatively autocorrelated, mean-reverting, and has directional structure between assets. At hourly resolution, the memory is gone: the noise looks flat, Gaussian, and approximately independent. The transition happens over the range 10 ms to 60 minutes, and it is monotone.

The resolution-dependence arises directly from how a deterministic agent-interaction system looks when observed through a temporal filter. The individual order decisions that make up the price process are made by agents with information, objectives, and constraints; the randomness enters only when we aggregate many such decisions into a single log-return. At fine aggregation, the individual structure still shows through. At coarse aggregation, the central limit theorem has washed it away.

What does this mean for practice? A model that specifies the resolution at which it operates and makes claims only within that band is doing something honest. A model that claims resolution-independence, that the same drift, diffusion coefficient, or kernel applies from 1 ms to 1 hr, is making an empirically false claim that this post has documented in detail.

The geometric language is not decorative. The curvature of the state-space metric, the Fisher-Rao geometry of the statistical manifold, the diffusion distance on the data manifold, and the wavelet variance decomposition of path-space all formalize the same underlying intuition: at fine timescales, the market lives on a non-flat manifold, and the tools of flat Euclidean geometry, which is to say most of standard financial mathematics, are the wrong tools. At coarse timescales, the manifold flattens, and the standard tools become adequate approximations. The art is knowing which timescale you are in.

References

1. Lo, A. W. and MacKinlay, A. C. (1988). Stock market prices do not follow random walks: Evidence from a simple specification test. Review of Financial Studies, 1(1), 41-66.

2. Taqqu, M. S., Teverovsky, V., and Willinger, W. (1995). Estimators for long-range dependence: An empirical study. Fractals, 3(4), 785-798.

3. Beran, J. (1994). Statistics for Long-Memory Processes. Chapman and Hall. Spectral characterization of long memory.

4. Doob, J. L. (1953). Stochastic Processes. Wiley. Chapter 11: characterization of Gaussian Markov processes.

5. Black, F. and Scholes, M. (1973). The pricing of options and corporate liabilities. Journal of Political Economy, 81(3), 637-654.

6. Avellaneda, M. and Stoikov, S. (2008). High-frequency trading in a limit order book. Quantitative Finance, 8(3), 217-224.

7. Gatheral, J., Jaisson, T., and Rosenbaum, M. (2018). Volatility is rough. Quantitative Finance, 18(6), 933-949.

8. Lyons, T. J. (1998). Differential equations driven by rough signals. Revista Matemática Iberoamericana, 14(2), 215-310.

9. Mallat, S. G. (1989). A theory for multiresolution signal decomposition: The wavelet representation. IEEE Transactions on Pattern Analysis and Machine Intelligence, 11(7), 674-693.

10. Percival, D. B. and Walden, A. T. (2000). Wavelet Methods for Time Series Analysis. Cambridge University Press.

11. Coifman, R. R. and Lafon, S. (2006). Diffusion maps. Applied and Computational Harmonic Analysis, 21(1), 5-30.

12. Amari, S. (1985). Differential-Geometrical Methods in Statistics. Springer. Fisher-Rao metric, Cramér-Rao as geodesic bound (Theorem 2.1), KL divergence as natural divergence to second order (Chapter 3).

13. Amari, S. (2016). Information Geometry and Its Applications. Springer.

14. Wiener, N. (1930). Generalized harmonic analysis. Acta Mathematica, 55, 117-258.

15. Khinchin, A. (1934). Korrelationstheorie der stationären stochastischen Prozesse. Mathematische Annalen, 109, 604-615.

16. Li, X., Wong, T.-K. L., Chen, R. T. Q., and Duvenaud, D. (2020). Scalable gradients for stochastic differential equations. AISTATS 2020.

17. Kidger, P., Foster, J., Li, X., Oberhauser, H., and Lyons, T. (2021). Neural SDEs as infinite-dimensional GANs. ICML 2021.

18. Jaeger, H. (2001). The echo state approach to analysing and training recurrent neural networks. GMD Technical Report 148.

19. Maass, W., Natschläger, T., and Markram, H. (2002). Real-time computing without stable states: A new framework for neural computation based on perturbations. Neural Computation, 14(11), 2531-2560.

20. Chevyrev, I. and Kormilitzin, A. (2016). A primer on the signature method in machine learning. arXiv:1603.03788.

21. Cont, R. (2001). Empirical properties of asset returns: stylized facts and statistical issues. Quantitative Finance, 1(2), 223-236.

22. Lo, A. W. (1991). Long-term memory in stock market prices. Econometrica, 59(5), 1279-1313.

23. Roll, R. (1984). A simple implicit measure of the effective bid-ask spread in an efficient market. Journal of Finance, 39(4), 1127-1139. Derives $\mathrm{Cov}(\Delta p_t, \Delta p_{t-1}) = -s^2/4$ for the bid-ask bounce; applied to NYSE stocks.

24. Hasbrouck, J. (2007). Empirical Market Microstructure. Oxford University Press. Intraday variance ratio profiles for NYSE blue chips including IBM, GE, and Merck.

25. Andersen, T. G., Bollerslev, T., Diebold, F. X., and Ebens, H. (2001). The distribution of realized stock return volatility. Journal of Financial Economics, 61, 43-76. Realized volatility for all 30 DJIA components; wavelet structure and regime shifts.